When people talk about storage in crypto, the conversation often stays very abstract. We hear words like “decentralized,” “onchain,” or “permanent,” but we rarely stop to think about what is actually being stored and how it is handled once it enters a network. Walrus starts from a much simpler and more honest place. Instead of trying to look like a file system or a cloud drive, it treats data for what it usually is in modern applications: large chunks of bytes that need to be kept available. These chunks are called blobs.

A blob is not a file in the usual sense. It does not care about folders, names, or formats. It is just a piece of data, often large, that an application wants to store and retrieve later. This could be a video for an NFT, a batch of rollup data, a dataset for an AI model, or an archive of historical records. In most systems, these things are still handled as if they were normal files, even though the system does not really need to understand what is inside them. It only needs to make sure they can be stored, found again, and reconstructed if something goes wrong.

Walrus is built around this idea. It does not try to be a general-purpose file system. It does not try to manage directories or user-friendly paths. It focuses on blobs because that is what most decentralized applications actually need. They need a place where large pieces of data can live reliably, without depending on a single server or a single company, and without wasting huge amounts of space on copies.

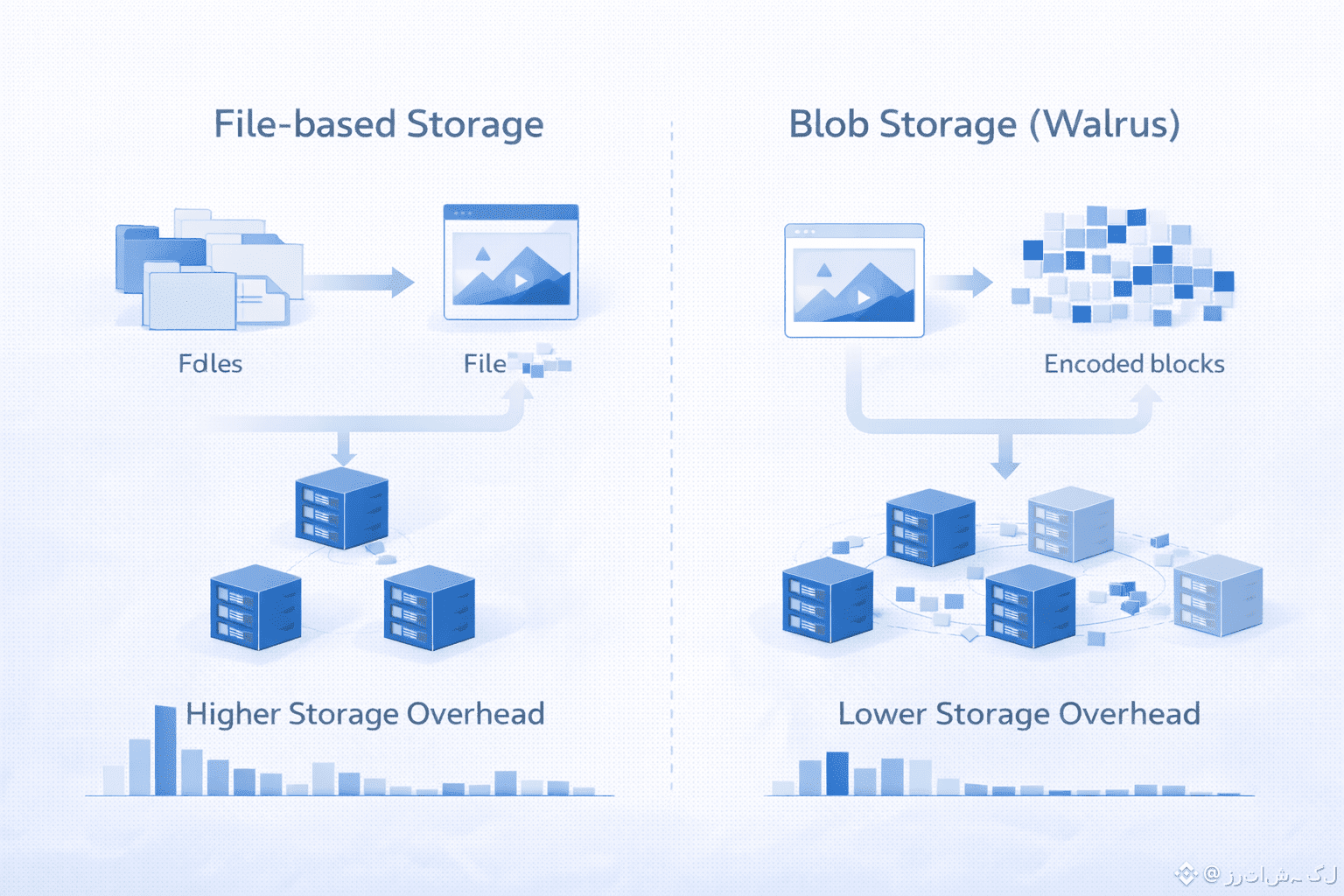

In many existing storage systems, reliability is achieved by replication. The same data is copied again and again and placed on different machines. This works, but it is expensive. Every extra copy means more disk space, more cost, and more coordination. For small files this might not matter much, but for large blobs it quickly becomes a serious problem. Total storage is the blob size multiplied by the number of copies, so a network that is meant to hold huge amounts of data for many years pays that multiplier on every byte, for as long as the data lives.
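
To make the cost difference concrete, here is a rough back-of-the-envelope comparison between full replication and splitting a blob into encoded pieces. The numbers are illustrative assumptions only (3 full copies versus 10 data pieces expanded to 15), not parameters taken from Walrus, and the function names are hypothetical.

```python
def replication_bytes(blob_size: int, copies: int) -> int:
    """Total bytes stored when every copy is a full duplicate of the blob."""
    return blob_size * copies


def erasure_coded_bytes(blob_size: int, k: int, n: int) -> int:
    """Total bytes stored when the blob is cut into k pieces and expanded to n.

    Each piece is roughly blob_size / k bytes (rounded up), and n pieces are kept.
    """
    piece_size = -(-blob_size // k)  # ceiling division
    return piece_size * n


GIB = 1024 ** 3
blob = 1 * GIB

# Assumed settings for illustration: 3 full copies vs. 10 pieces expanded to 15.
replicated = replication_bytes(blob, copies=3)
encoded = erasure_coded_bytes(blob, k=10, n=15)

print(f"replication:   {replicated / GIB:.2f} GiB stored")
print(f"erasure coded: {encoded / GIB:.2f} GiB stored")
```

Under these assumptions the replicated blob occupies 3 GiB and survives the loss of two copies, while the encoded blob occupies about 1.5 GiB and, with an ideal code where any 10 of the 15 pieces suffice, survives the loss of five pieces.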

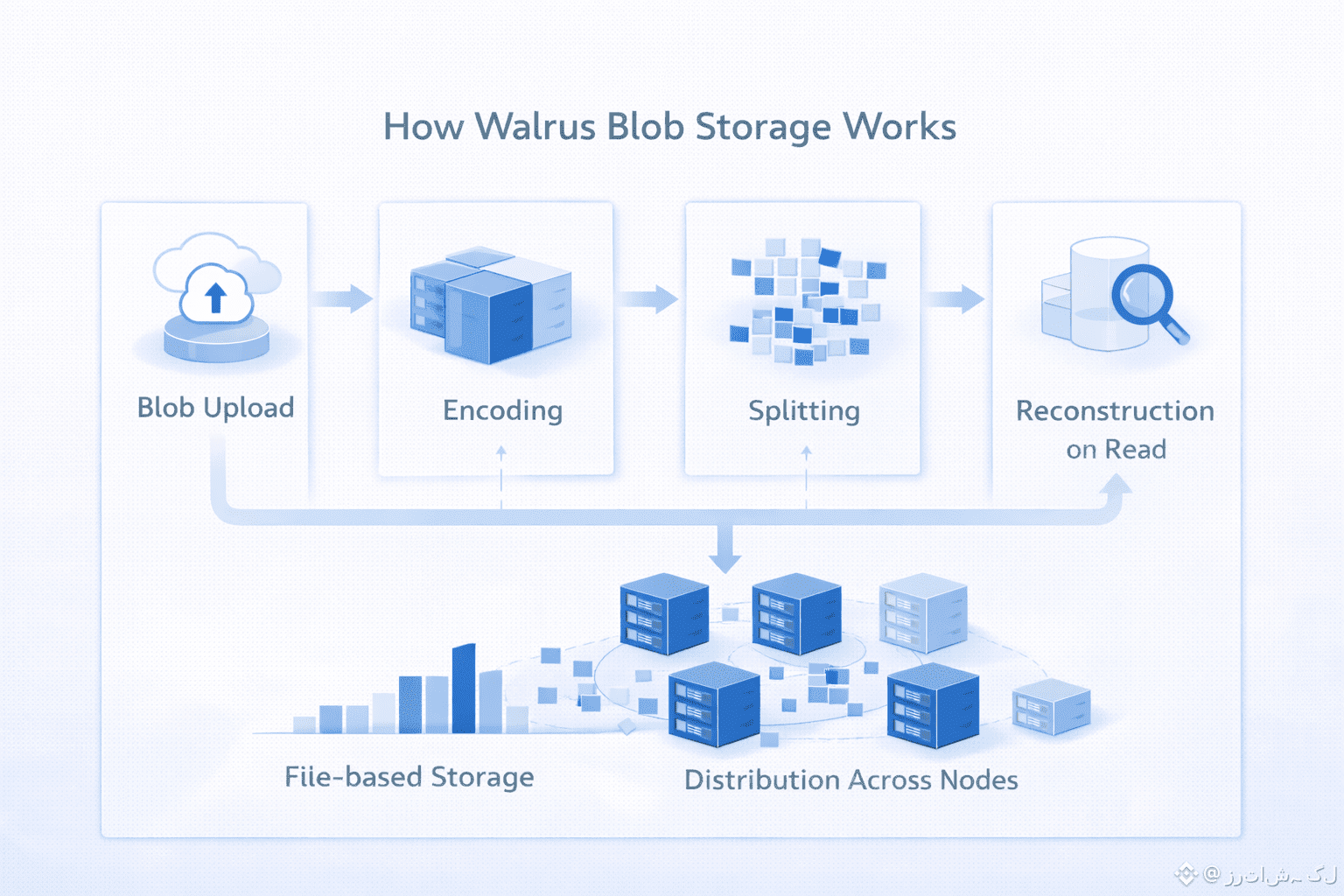

Walrus takes a different path. When a blob is uploaded, it is not simply copied. It is encoded and split into many pieces. These pieces are then spread across the network. No single node has the full blob, but the system is designed so that the original data can be reconstructed as long as enough pieces remain available. If some nodes go offline or disappear, the blob does not vanish. It can still be rebuilt from what is left, and the network can repair itself by creating new pieces to replace the missing ones.
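
The idea of "encode, split, and rebuild from what is left" can be sketched with the simplest possible scheme: k data pieces plus one XOR parity piece. This is a deliberately minimal stand-in, not Walrus's actual encoding (which this article does not specify), and it only survives the loss of a single piece, whereas a production system would tolerate many. The function names `encode` and `reconstruct` are hypothetical.

```python
from functools import reduce
from typing import Optional


def xor_bytes(a: bytes, b: bytes) -> bytes:
    """Byte-wise XOR of two equal-length byte strings."""
    return bytes(x ^ y for x, y in zip(a, b))


def encode(blob: bytes, k: int) -> list[bytes]:
    """Split blob into k equal-size data pieces plus one XOR parity piece."""
    piece_len = -(-len(blob) // k)  # ceiling division
    padded = blob.ljust(piece_len * k, b"\x00")
    data = [padded[i * piece_len:(i + 1) * piece_len] for i in range(k)]
    parity = reduce(xor_bytes, data)
    return data + [parity]


def reconstruct(pieces: list[Optional[bytes]], blob_len: int) -> bytes:
    """Rebuild the blob from k+1 slots where at most one slot is None."""
    missing = [i for i, p in enumerate(pieces) if p is None]
    if len(missing) > 1:
        raise ValueError("single parity can only recover one lost piece")
    filled = list(pieces)
    if missing:
        present = [p for p in pieces if p is not None]
        # The XOR of all surviving pieces equals the lost one.
        filled[missing[0]] = reduce(xor_bytes, present)
    data = filled[:-1]  # drop the parity piece
    return b"".join(data)[:blob_len]  # strip the zero padding


blob = b"large opaque payload, e.g. a batch of rollup data"
pieces = encode(blob, k=4)
pieces[2] = None  # simulate one storage node disappearing
recovered = reconstruct(pieces, len(blob))
print(recovered == blob)
```

Note that the encoder never inspects what the bytes mean. It only needs the length and the raw content, which is exactly why a blob is a convenient unit to work with.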

This is one of the main reasons Walrus focuses on blobs instead of files. The system does not need to know what the data represents. It only needs to guarantee that the blob, as a whole, remains recoverable. This keeps the design simple and makes no pretense of being a traditional file system. It is a data availability and storage layer, optimized for large, opaque objects.

Another important point is how this fits into the real world of decentralized applications. Rollups, for example, need to publish large amounts of data so that anyone can verify what happened. NFT platforms need to store images, videos, and other media that should not disappear when a company shuts down. Social platforms, games, and AI systems all produce data that is large and poorly suited to being stored directly on a blockchain. In all of these cases, what they really need is blob storage, not a file browser.

By designing everything around blobs, Walrus can also be more precise about its guarantees. It is not promising that your data will always be easy to browse or neatly organized. It is promising something more fundamental: that once a blob is accepted by the system, it will remain available and reconstructible, even as nodes come and go, and even as the network changes over time.

This focus also changes how you think about reading data. When someone wants to retrieve a blob, the system does not look for a single machine that holds the whole blob. It gathers enough pieces from different places and rebuilds the original data. This may sound complex, but it is actually a very natural fit for a decentralized environment, where you should never assume that any single machine will always be there.
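
A read of this kind can be sketched as "ask nodes until you hold enough pieces, then decode". The example below uses a small polynomial code over the prime field of size 257, in the style of Reed-Solomon, so that any k of the n pieces are enough. It is an illustrative assumption, not a description of the code Walrus actually uses, and modelling the network as a dictionary from piece index to piece (with `None` for an unreachable node) is purely hypothetical.

```python
from typing import Optional

P = 257  # smallest prime above 255, so every byte value is a field element


def _interpolate_at(xs: list[int], ys: list[int], x: int) -> int:
    """Evaluate at x the unique polynomial of degree < len(xs) through (xs, ys), mod P."""
    total = 0
    for i, (xi, yi) in enumerate(zip(xs, ys)):
        num, den = 1, 1
        for j, xj in enumerate(xs):
            if j != i:
                num = num * (x - xj) % P
                den = den * (xi - xj) % P
        total = (total + yi * num * pow(den, -1, P)) % P
    return total


def encode(blob: bytes, k: int, n: int) -> list[list[int]]:
    """Return n pieces; piece i holds one field element per k-byte chunk."""
    padded = blob.ljust(-(-len(blob) // k) * k, b"\x00")
    chunks = [padded[i:i + k] for i in range(0, len(padded), k)]
    xs = list(range(k))
    # The k bytes of a chunk are the polynomial's values at x = 0..k-1;
    # piece x stores that polynomial's value at x for every chunk.
    return [[_interpolate_at(xs, list(c), x) for c in chunks] for x in range(n)]


def decode(pieces: dict[int, list[int]], k: int, blob_len: int) -> bytes:
    """Rebuild the blob from any k pieces, keyed by piece index."""
    xs = sorted(pieces)[:k]
    if len(xs) < k:
        raise ValueError("need at least k pieces")
    out = bytearray()
    for c in range(len(pieces[xs[0]])):
        ys = [pieces[x][c] for x in xs]
        out.extend(_interpolate_at(xs, ys, x) for x in range(k))
    return bytes(out[:blob_len])


def read_blob(nodes: dict[int, Optional[list[int]]], k: int, blob_len: int) -> bytes:
    """Query nodes one by one, skip unreachable ones, stop once k pieces are held."""
    gathered: dict[int, list[int]] = {}
    for index, piece in nodes.items():
        if piece is None:
            continue
        gathered[index] = piece
        if len(gathered) == k:
            return decode(gathered, k, blob_len)
    raise RuntimeError("not enough pieces available")


blob = b"media for an NFT"
nodes: dict[int, Optional[list[int]]] = dict(enumerate(encode(blob, k=3, n=7)))
for offline in (0, 1, 4, 6):
    nodes[offline] = None  # four of the seven nodes are unreachable
restored = read_blob(nodes, k=3, blob_len=len(blob))
print(restored == blob)
```

The reader never cares which nodes answered, only that enough of them did. That is the property that makes this style of retrieval robust when individual machines come and go.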

In a way, Walrus treats storage less like a library and more like a living system. Data is not placed on a shelf and left there forever. It is actively maintained, checked, and repaired. The blob stays the same, but the pieces that make it up can change over time as the network adapts.
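
The "checked and repaired" part can be sketched as an audit pass followed by regeneration of whatever failed. The sketch below assumes that a SHA-256 digest of each piece is recorded when the blob is stored, and that pieces follow a simple XOR parity layout (the last piece is the XOR of the others). Both are assumptions made for illustration. The article does not say how Walrus verifies or regenerates pieces, and `audit` and `repair` are hypothetical names.

```python
import hashlib
from functools import reduce
from typing import Optional


def xor_bytes(a: bytes, b: bytes) -> bytes:
    """Byte-wise XOR of two equal-length byte strings."""
    return bytes(x ^ y for x, y in zip(a, b))


def digest(piece: bytes) -> str:
    """Fingerprint recorded for a piece when it is first stored."""
    return hashlib.sha256(piece).hexdigest()


def audit(stored: list[Optional[bytes]], expected: list[str]) -> list[int]:
    """Return indexes of pieces that are missing or fail their hash check."""
    return [
        i for i, piece in enumerate(stored)
        if piece is None or digest(piece) != expected[i]
    ]


def repair(stored: list[Optional[bytes]], expected: list[str]) -> list[bytes]:
    """Regenerate a single bad piece from the healthy ones (XOR parity layout)."""
    bad = audit(stored, expected)
    if len(bad) > 1:
        raise ValueError("single parity can only repair one piece")
    healed = list(stored)
    if bad:
        good = [p for i, p in enumerate(stored) if i != bad[0]]
        rebuilt = reduce(xor_bytes, good)
        if digest(rebuilt) != expected[bad[0]]:
            raise ValueError("rebuilt piece does not match its recorded hash")
        healed[bad[0]] = rebuilt
    return healed


data = [b"aaaa", b"bbbb", b"cccc"]
pieces = data + [reduce(xor_bytes, data)]  # three data pieces plus parity
expected = [digest(p) for p in pieces]

stored: list[Optional[bytes]] = list(pieces)
stored[1] = b"bbbX"  # silent corruption on one node
bad = audit(stored, expected)
healed = repair(stored, expected)
print(bad, healed == pieces)
```

The blob's content never changes here. Only the stored piece is replaced, which matches the picture of a stable blob sitting on top of pieces that are refreshed over time.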

This is why the idea of blob storage is not just a technical detail in Walrus. It is the center of the whole design. By admitting that most modern decentralized applications deal with large, simple chunks of data, and by building a system that is honest about that, Walrus avoids many of the problems that come from trying to imitate traditional storage in a world where the assumptions are completely different.

Instead of pretending to be a decentralized hard drive, Walrus becomes something more focused and more realistic: a network that is good at one job, which is keeping blobs available, even when the environment is unpredictable and sometimes hostile. In the long run, that kind of clarity is often more valuable than trying to do everything at once.