I didn’t really understand why people kept saying Pixels was becoming infrastructure.

From the outside, it still looks like a farming game. You log in, you complete loops, you earn, you move on. Nothing about that immediately signals this is something other studios would build on top of.

But the more I sat with the Stacked announcement, the less it felt like a product expansion and the more it started to feel like a release of something that had already been forming inside the game for a long time.

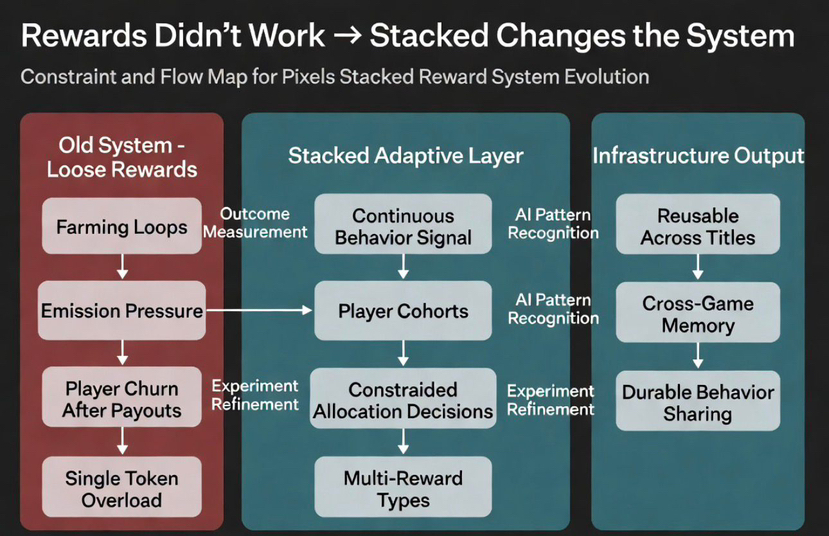

What I missed at first is simple: this isn’t a reward system. It’s an allocation system.

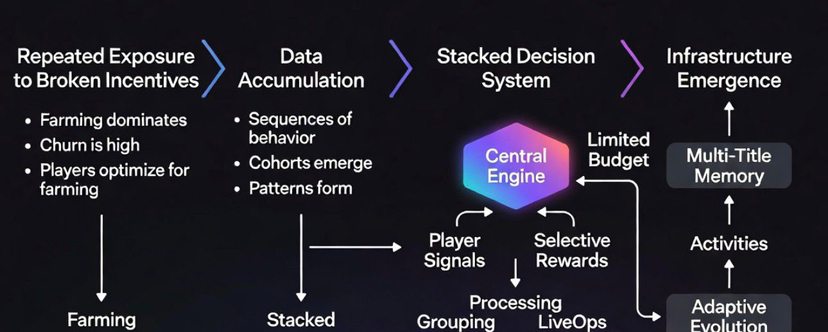

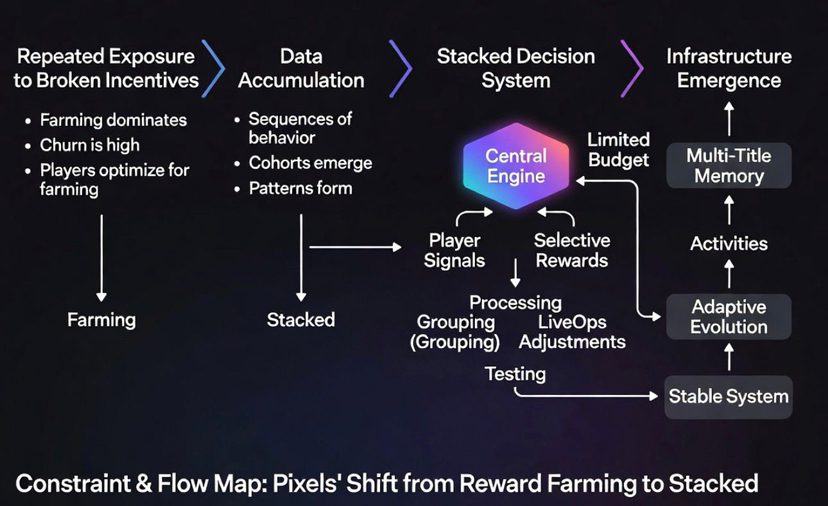

What changed for me was realizing that the most valuable thing Pixels built wasn’t the world, or the assets, or even the gameplay loop. It was the repeated exposure to how players behave under incentives that don’t hold up.

Most teams don’t get that far. They design a system, launch it, see early traction, and then move on before the cracks become visible. Pixels didn’t really have that luxury. It kept running the same core loop long enough to see what happens when rewards are too loose, when farming behavior dominates, when players show up for payouts but don’t stay for anything else. That kind of feedback doesn’t show up in dashboards immediately. It only becomes clear when the system has been stressed over time.

And once you’ve seen that cycle play out a few times, it changes what you build next.

That’s where Stacked starts to make sense.

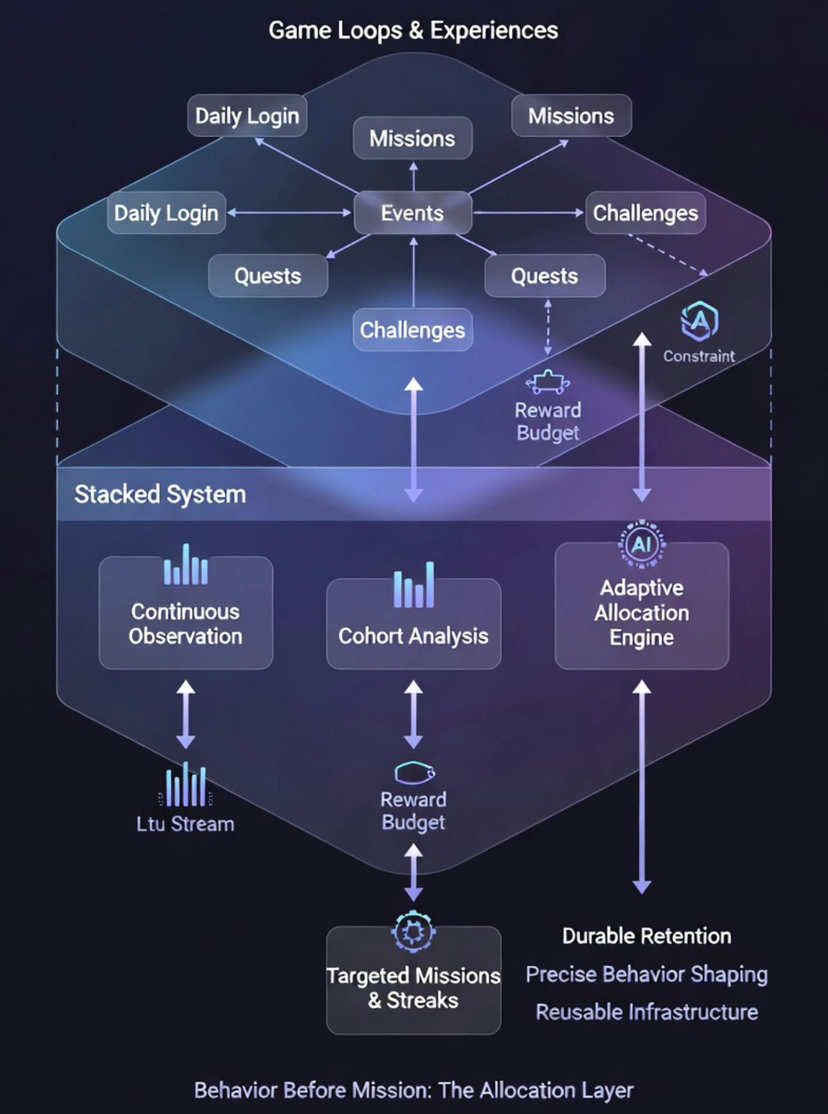

At first glance, it looks like a rewards layer. Missions, streaks, payouts, a single app that connects multiple experiences. If you stop there, it’s easy to assume this is just a better quest board or a more organized way to distribute incentives.

But that framing is wrong. This is not a quest system. This is a capital allocation engine for player behavior.

The way it’s described, and more importantly the way it must be operating underneath, suggests something closer to a decision system sitting above the game loops rather than inside them.

The starting point isn’t the mission anymore. It’s the behavior that happens before the mission even exists.

Over time, the game has already collected enough signal to distinguish between different types of players. Not just based on how active they are, but based on how they react when incentives change. Some players continue engaging even when rewards are reduced. Some only show up when payouts spike. Some move across different parts of the game and build longer-term patterns. Others extract value from a single loop and disappear.

Those differences matter more than activity itself.

Once you accept that, the role of rewards changes.

They stop being something you attach to actions and start becoming something you allocate based on expectation.

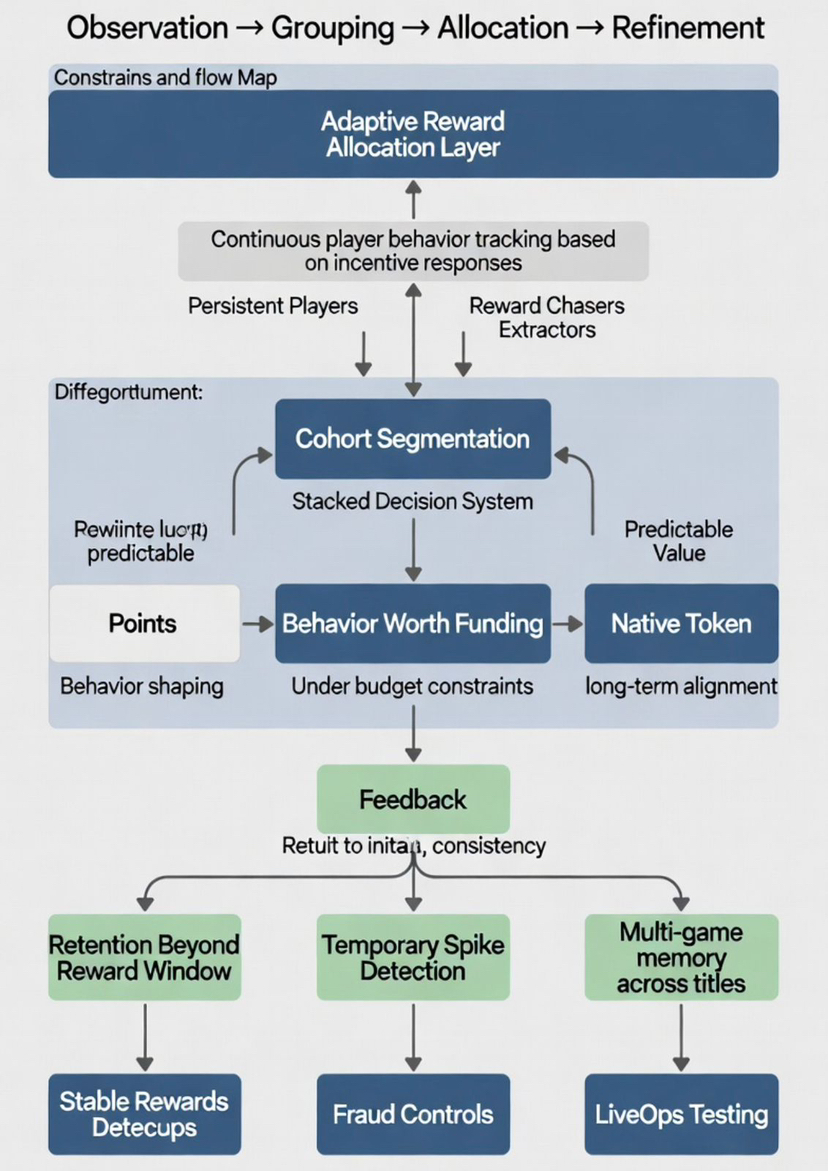

That’s the anchor: behavior → segmentation → allocation → feedback → repeat.

That’s where the internal flow of Stacked becomes important, even if it’s not explicitly presented that way.

Player behavior gets tracked continuously, not just as isolated events but as sequences over time. Those sequences get compared, grouped, and refined into cohorts that behave in similar ways under similar conditions. That segmentation is not the end of the process; it’s the input into the next decision.
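To make the segmentation step concrete, here is a minimal sketch of how players could be grouped by how they react when rewards change. Nothing here comes from the announcement: the `PlayerHistory` fields, the cohort names, and the 0.7 and 3-loop thresholds are all my own assumptions, chosen only to mirror the player types described above.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class PlayerHistory:
    """Hypothetical per-player record; field names are illustrative only."""
    player_id: str
    sessions_normal: list[int]   # daily sessions while rewards were at normal levels
    sessions_reduced: list[int]  # daily sessions while rewards were reduced
    loops_touched: int           # number of distinct game loops the player used


def segment(p: PlayerHistory) -> str:
    """Assign a cohort label based on reward sensitivity and breadth of play.

    Assumes both session lists are non-empty. Thresholds are placeholders.
    """
    normal = mean(p.sessions_normal)
    reduced = mean(p.sessions_reduced)
    if normal == 0:
        return "inactive"
    # Share of activity that disappears when rewards are reduced.
    sensitivity = 1 - reduced / normal
    if sensitivity >= 0.7:
        return "payout_chaser" if p.loops_touched > 1 else "single_loop_extractor"
    if p.loops_touched >= 3:
        return "cross_loop_builder"
    return "steady"


players = [
    PlayerHistory("a", [4, 4, 4], [4, 3, 4], 1),
    PlayerHistory("b", [5, 5], [0, 1], 1),
    PlayerHistory("c", [2, 2], [2, 2], 4),
]
cohorts = {p.player_id: segment(p) for p in players}
```

The point of the sketch is that the label depends on the change in behavior between reward regimes, not on raw activity. A real system would presumably work on much richer sequences than two averages.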

The system has to decide which of those behaviors is actually worth funding.

In practice, that implies something closer to continuous experimentation under constraint: multi-armed allocation across cohorts, where reward spend is dynamically shifted toward behaviors that maximize retention, depth, or cross-loop engagement.
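As a rough illustration of what allocation under a fixed budget could look like, the sketch below splits a reward budget across cohorts. It is a simple heuristic (an even exploration floor plus a split proportional to estimated lift), not a full bandit algorithm such as Thompson sampling or UCB, and not a description of how Stacked works. The function names, the `explore_share` parameter, and the lift numbers are hypothetical.

```python
def allocate_budget(
    budget: float,
    est_lift: dict[str, float],
    explore_share: float = 0.2,
) -> dict[str, float]:
    """Split a fixed reward budget across cohorts.

    est_lift maps cohort -> estimated retention lift per unit of spend
    (an assumed metric). A fixed share is spread evenly so every cohort
    keeps producing data; the rest follows the current estimates.
    """
    if not est_lift:
        return {}
    explore = budget * explore_share
    exploit = budget - explore
    floor = explore / len(est_lift)
    # Negative estimates earn no exploit spend, only the exploration floor.
    positive = {c: max(v, 0.0) for c, v in est_lift.items()}
    total = sum(positive.values())
    alloc = {}
    for cohort, value in positive.items():
        share = value / total if total > 0 else 1 / len(positive)
        alloc[cohort] = floor + exploit * share
    return alloc


def update_estimate(old: float, observed: float, lr: float = 0.3) -> float:
    """Move the estimate toward the newly observed lift (exponential smoothing)."""
    return old + lr * (observed - old)


estimates = {"steady": 0.3, "payout_chaser": 0.0, "cross_loop_builder": 0.1}
alloc = allocate_budget(1000.0, estimates)
```

Because the budget is fixed, raising one cohort's estimate necessarily lowers every other cohort's share on the next round, which is the constraint the rest of this argument depends on.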

That’s where most designs fall apart, because they never really operate under constraint. It’s easy to reward everything when the goal is growth. It becomes much harder when rewards are treated as budget rather than emission.

If one group is being incentivized, another group is not. If one type of activity is being reinforced, another is being ignored. Those decisions don’t just affect short-term engagement; they shape how the entire system evolves over time.

Every reward becomes a bet. Every allocation competes for the same finite budget.

And that’s exactly where this stops feeling like a game mechanic and starts feeling like infrastructure.

Because once you have a layer that continuously observes behavior, groups it, tests responses, and reallocates rewards based on outcomes, you’re no longer designing static loops. You’re running an adaptive system.

The LiveOps framing in the announcement is important here, but only if you read it beyond the surface. Targeting, fraud controls, testing, attribution: these are not just features to improve efficiency. They are components of a feedback system that determines whether reward spend is actually producing something durable.

If a mission increases retention beyond the reward window, it gets reinforced. If it only generates temporary spikes, it gets adjusted or removed. If a cohort behaves differently than expected, the system isolates it and learns from it. Over time, those adjustments accumulate into something that looks stable from the outside but is constantly shifting underneath.
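The decision rule in that paragraph can be written down in a few lines. This is a sketch under my own assumptions: retention is measured as a fraction of the cohort still active, there is a baseline to compare against, and the 2-point `min_lift` threshold is a placeholder. The `no_effect` outcome is also my addition, since the case of a mission that never moves retention at all is not covered above.

```python
def review_mission(
    baseline_retention: float,
    during_retention: float,
    after_retention: float,
    min_lift: float = 0.02,
) -> str:
    """Classify a mission by whether its retention lift outlasts the reward window.

    All three inputs are retention rates in [0, 1] for comparable cohorts:
    without the mission, while rewards are paid, and after rewards stop.
    """
    lift_during = during_retention - baseline_retention
    lift_after = after_retention - baseline_retention
    if lift_after >= min_lift:
        return "reinforce"          # effect persists beyond the reward window
    if lift_during >= min_lift:
        return "adjust_or_remove"   # temporary spike that fades with the payout
    return "no_effect"              # hypothetical third case, not from the source


decisions = [
    review_mission(0.30, 0.45, 0.36),
    review_mission(0.30, 0.45, 0.30),
    review_mission(0.30, 0.31, 0.30),
]
```

The second case is the interesting one: the mission looks like a success while rewards are flowing, and only the post-window measurement shows that the spend bought nothing lasting.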

This is effectively a closed-loop optimization system: observe → allocate → measure → update.

That’s where the AI layer actually fits in, and it’s different from how most projects use it. It’s not there to generate content or to enhance gameplay directly. It’s there to help process the volume of decisions that come from running multiple experiments across different cohorts and reward types at the same time. At that scale, the system needs pattern recognition that goes beyond manual tuning.

So the intelligence sits in allocation, not presentation.

And once that layer is in place, other parts of the design naturally start to change.

The multi-reward direction is one of them. Forcing a single token to handle every role (incentive, payout, speculation, alignment) creates pressure that compounds over time. When all behaviors map to the same output, the system loses precision. High-value and low-value actions become indistinguishable at the reward level.

Separating reward types allows the system to be more selective. Points can be used to shape behavior without immediate liquidity impact. Stable rewards can provide predictable value where necessary. The native token can shift away from constant emission toward a role that reflects longer-term participation and positioning within the ecosystem.

In other words: different behaviors require different currencies, or the system collapses into noise.

That shift only works if the allocation layer is already functioning.

Otherwise, it just fragments the economy.

But here, the allocation is the core.

And that’s also why the rollout is controlled.

From the outside, it might look like a cautious launch. Internally, it’s a necessity. Systems that make decisions at this level amplify both success and failure. If the logic is wrong, scaling it quickly just spreads the mistake across more users and more environments.

Starting with internal titles changes that dynamic.

Pixels already understands the loops inside its own games. It knows where incentives leak, where players churn, where activity looks healthy but doesn’t translate into anything meaningful. That context allows the system to be tested in conditions where the variables are known.

As more titles get connected, the system starts to build something more interesting.

Memory.

Not just of actions, but of behavior across contexts.

A player’s pattern in one game can influence how they are treated in another. The system is no longer isolated to a single loop. It’s learning how individuals respond to incentives across different environments, and using that to refine its decisions.

That’s where it becomes reusable.

Not because it offers better rewards, but because it offers a way to decide what should be rewarded at all.

Most systems distribute incentives. This one filters behavior.

And that’s a different kind of product.

It’s slower to build, harder to get right, and less obvious from the outside. But once it works, it changes the role of everything built on top of it.

The game becomes one environment among many.

The system becomes the constant.

And the real output is no longer missions or rewards.

It’s the ability to shape behavior in a way that holds up over time.

If this works, the advantage isn’t content. It’s control over incentive flow.

That’s why this doesn’t feel like Pixels expanding into infrastructure.

It feels like it finally reached a point where the system it had been running internally is stable enough to be exposed.

Not as a promise.

But as something that already had to survive real use.