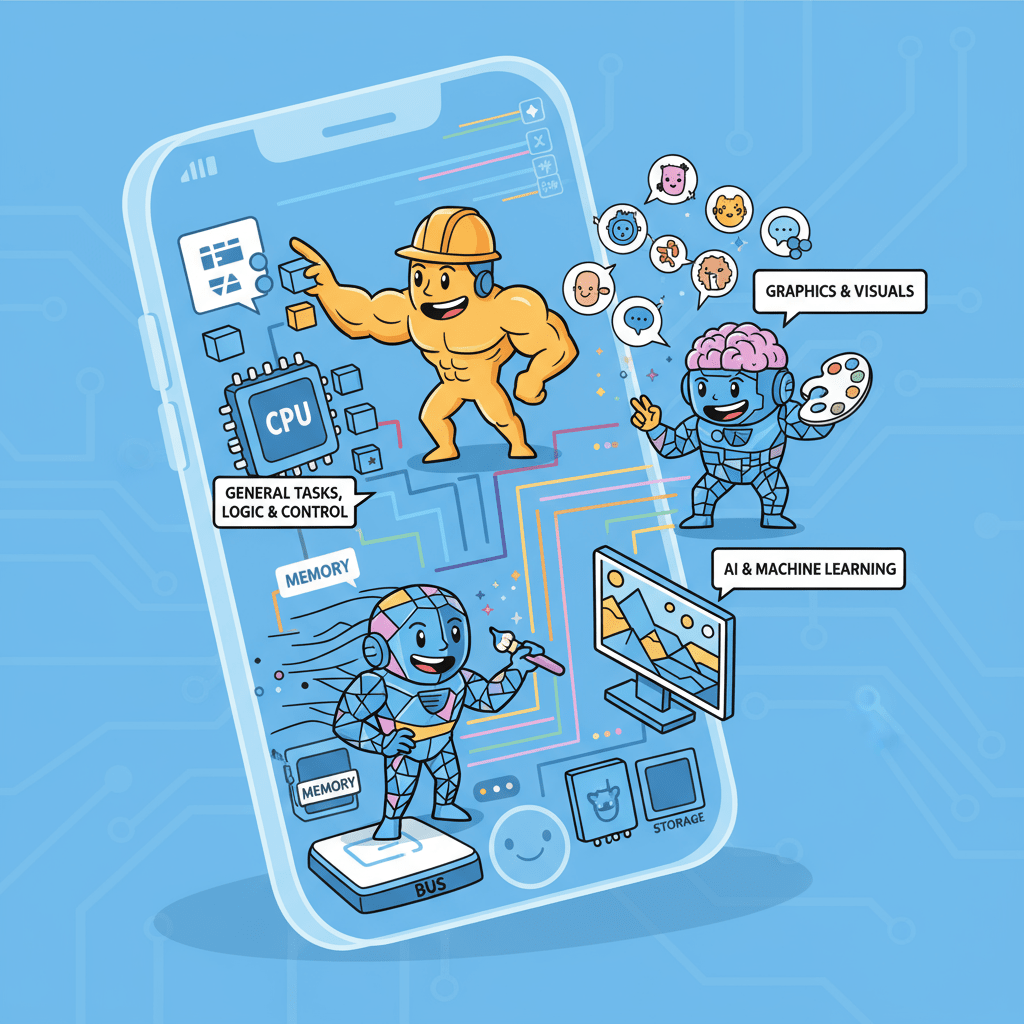

The smartphone in your hand can play music, scroll smoothly through short videos, and download updates in the background, all at the same time. Why? Because it does not rely on a single processor doing all the work. It has a team of 'experts,' each doing its own job: the CPU coordinates everything, the GPU handles graphics, and the NPU runs AI workloads. This 'division of labor' model underpins most modern high-performance computing.

Now look at blockchain. Early blockchains, like Bitcoin and Ethereum, resemble an old single-core computer. They are reliable and secure, but every full node in the network must re-execute the same transactions from start to finish to reach consensus. This is like a company where everyone, from the CEO to the receptionist, must personally work through the entire set of books before the final profit can be confirmed. It is secure, but painfully inefficient.

Later came the wave of 'modularity,' a significant advance for the industry. We began to apply 'division of labor,' separating execution, data availability, and settlement so that different layers handle each one. This is akin to a company setting up dedicated sales, finance, and administration departments, which improves efficiency considerably. Layer 2 is a product of this thinking.

But a new problem arises. Even the rollup layer, which is specifically responsible for 'execution,' still resembles a 'general practitioner' more than a 'specialist.' It can handle all kinds of ordinary transactions, but when it meets a 'difficult problem' that requires a very large amount of computation, such as updating the physical state of an entire game world or running a complex risk simulation over massive market data, it struggles. That one task can slow down the entire system and cause gas fees to spike.

At this point, we need to think about the next step: since the 'general practitioner' is overwhelmed, why not equip it with a 'top expert advisory team'?

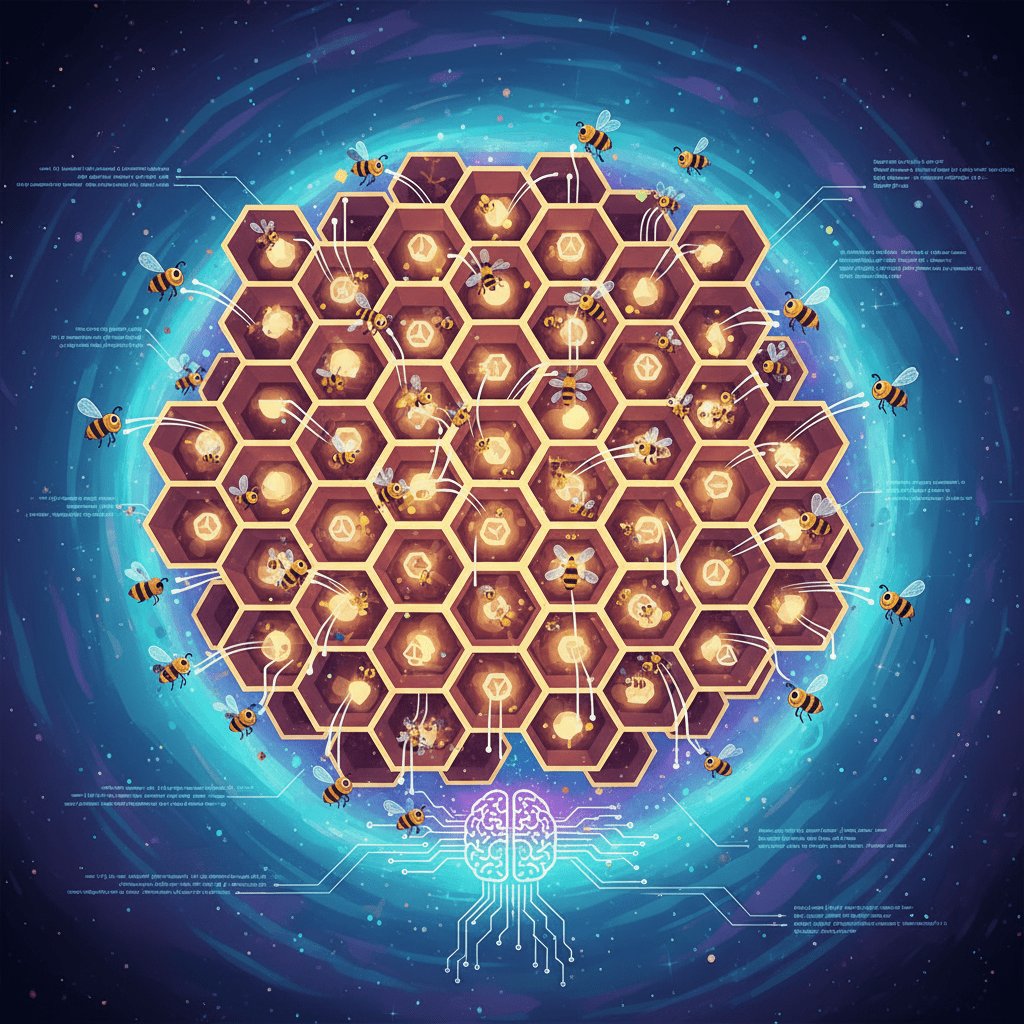

This is exactly what Lagrange is doing. It does not seek to replace the existing L1 or L2 but chooses to become an 'external super computing expert' for them, a 'ZK co-processor' that can be called upon as needed.

Its logic is straightforward:

When a dApp needs to handle a highly complex computation, the main chain (whether L1 or L2) does not try to compute it itself. Instead, it 'outsources' the task to Lagrange.

After receiving the task, Lagrange puts its 'expert' specialty to work. It breaks the large task down into many smaller subtasks and distributes them across its decentralized node network, so that many nodes compute in parallel, like a supercomputer with a very large number of cores.

After the computation is completed, Lagrange uses zero-knowledge proof technology to produce a compact 'proof' that the result was computed correctly, one that is infeasible to forge and far smaller than the computation it attests to.

Finally, it hands this lightweight 'proof' back to the main chain. The smart contracts on the main chain only need to do a small amount of work to verify the proof. Once it checks out, they can trust the result without re-running the computation.
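To make the 'outsource, compute in parallel, verify cheaply' flow more concrete, here is a minimal Python sketch. It is not Lagrange's API and it is not a zero-knowledge proof: all function names are hypothetical, and a classic probabilistic check (Freivalds' algorithm for matrix multiplication) stands in for proof verification. The only point it illustrates is the asymmetry described above: the heavy work is split across workers, while the checker does much less work than recomputing the result.

```python
import random
from concurrent.futures import ThreadPoolExecutor
from typing import List, Optional

Matrix = List[List[int]]


def multiply_rows(a_rows: Matrix, b: Matrix) -> Matrix:
    """One worker's subtask: multiply a block of rows of A by B (the expensive part)."""
    cols = list(zip(*b))
    return [[sum(x * y for x, y in zip(row, col)) for col in cols] for row in a_rows]


def coprocessor_multiply(a: Matrix, b: Matrix, workers: int = 4) -> Matrix:
    """Hypothetical off-chain side: split A into row blocks and compute them in parallel."""
    block = max(1, -(-len(a) // workers))  # ceiling division
    blocks = [a[i:i + block] for i in range(0, len(a), block)]
    with ThreadPoolExecutor(max_workers=workers) as pool:
        parts = pool.map(lambda rows: multiply_rows(rows, b), blocks)
    return [row for part in parts for row in part]


def mat_vec(m: Matrix, v: List[int]) -> List[int]:
    """Matrix-vector product, quadratic in the matrix size."""
    return [sum(x * y for x, y in zip(row, v)) for row in m]


def cheap_verify(a: Matrix, b: Matrix, c: Matrix, rounds: int = 20,
                 rng: Optional[random.Random] = None) -> bool:
    """Hypothetical 'main chain' side, using Freivalds' check as a stand-in.

    Accepts only if A(Br) == Cr for every random 0/1 vector r. Each round uses
    matrix-vector products only, and a wrong C survives a round with
    probability at most 1/2.
    """
    rng = rng or random.Random()
    width = len(b[0])
    for _ in range(rounds):
        r = [rng.randint(0, 1) for _ in range(width)]
        if mat_vec(a, mat_vec(b, r)) != mat_vec(c, r):
            return False
    return True


if __name__ == "__main__":
    a = [[1, 2], [3, 4], [5, 6]]
    b = [[7, 8], [9, 10]]
    result = coprocessor_multiply(a, b, workers=2)  # heavy work, done "off-chain"
    print(cheap_verify(a, b, result))               # light check, done "on-chain"
```

A real ZK co-processor differs in important ways: the verifier receives a succinct cryptographic proof rather than re-deriving anything from the full inputs, and soundness rests on cryptographic assumptions rather than on repeated random checks. The division of roles, however, is the same.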

You see, throughout the entire process, the main chain, this 'general practitioner,' is never overwhelmed. It simply makes a smart decision: delegate the specialized task to a 'specialist.' It is responsible only for the final review and confirmation.

This is a more mature and efficient system architecture. It acknowledges that 'each profession has its own expertise.' The core value of the blockchain main chain lies in ensuring security and consensus, rather than performing intensive computations. Lagrange focuses on providing verifiable, large-scale computing power. Combining the two is what makes it possible to aim for both 'decentralized trust' and 'Web2-level performance.'

This enables us to build applications that we previously wouldn't have dared to imagine: not just minor tweaks to existing dApps, but genuinely new species of application.