A blockchain is like a meticulous but somewhat slow accountant: it excels at recording entries (transactions) one by one, in order, and ensuring that each entry is correct. This 'serial processing' model underpins security and consensus, but it also shackles performance. When you need it to handle thousands of interrelated computations at once, such as analyzing the risk exposure of every user in a DeFi protocol or updating the status of each unit in a large strategy game in real time, it will tell you: 'Sorry, please queue up, one at a time.'

The industry's mainstream answer to this problem is Layer 2, which is like hiring a nimble assistant for the accountant: the assistant preprocesses and packages the documents, and the accountant then records them in a single batch. This improves efficiency but does not change the accountant's core working model of 'serial processing.' For tasks that inherently require 'parallel processing,' such as computations over massive numbers of data points, it remains inadequate.

This is where Lagrange comes in. It does not intend to transform the accountant himself, nor does it merely serve as an assistant. Lagrange is positioned as a 'super computing cluster' specifically equipped for blockchain main chains (whether L1 or L2), a 'ZK co-processor' capable of performing large-scale parallel computations.

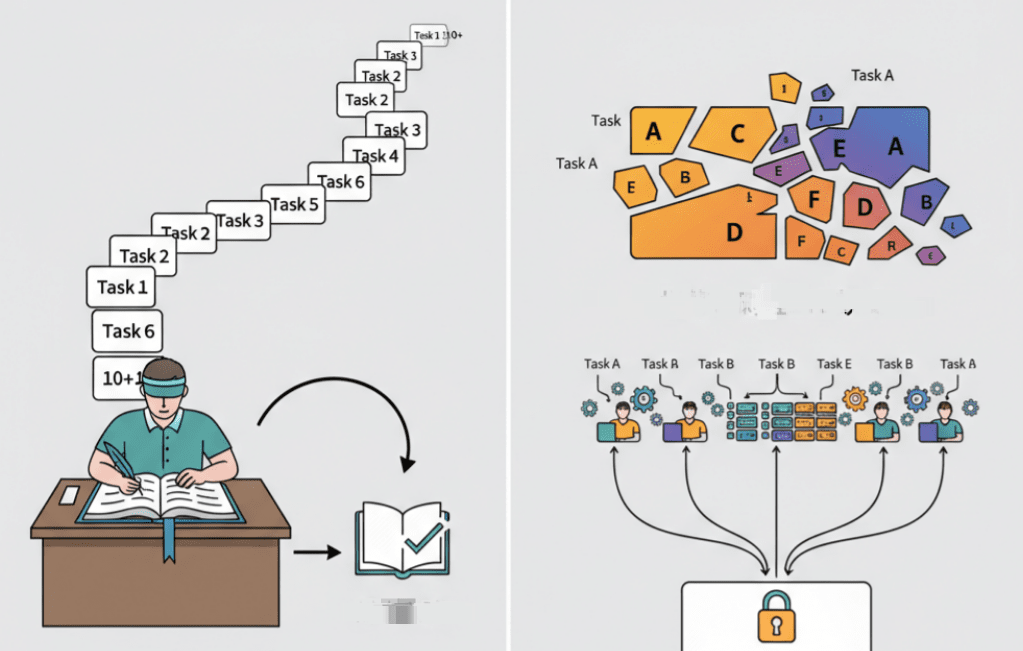

Its core mechanism can be understood as two key steps: first, task decomposition and parallel processing; second, result aggregation into a trustworthy proof.

First, when a dApp submits an extremely complex computation request, such as 'please calculate the new positions of all player units for the next second based on this 1TB of game world state data,' Lagrange does not attempt to have a single node complete the entire task. Instead, it uses its dynamic 'State Committees' architecture. You can think of this as a smart task scheduling center that splits the 1TB of data and the associated computation into thousands of small pieces, then assembles hundreds or thousands of small node committees on demand, each responsible for one piece of the computation.
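To make the splitting step concrete, here is a minimal Python sketch. It is not Lagrange's scheduler: the names `Task`, `split_into_tasks`, and `assign_to_committees`, the fixed committee size, and the round-robin assignment are all assumptions made purely for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    chunk_id: int
    start: int  # index of the first item in the chunk
    end: int    # index one past the last item in the chunk


def split_into_tasks(total_items: int, chunk_size: int) -> list[Task]:
    """Cut a dataset of `total_items` items into fixed-size chunks."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    return [
        Task(chunk_id, start, min(start + chunk_size, total_items))
        for chunk_id, start in enumerate(range(0, total_items, chunk_size))
    ]


def assign_to_committees(
    tasks: list[Task], nodes: list[str], committee_size: int
) -> dict[int, list[str]]:
    """Group nodes into committees and hand out tasks round-robin.

    Hypothetical policy: a real scheduler would likely weigh stake,
    load, and availability rather than simply cycling through committees.
    """
    if committee_size <= 0:
        raise ValueError("committee_size must be positive")
    committees = [
        nodes[i:i + committee_size] for i in range(0, len(nodes), committee_size)
    ]
    if not committees:
        raise ValueError("at least one node is required")
    return {
        task.chunk_id: committees[task.chunk_id % len(committees)]
        for task in tasks
    }


# Ten items in chunks of four, four nodes in committees of two.
tasks = split_into_tasks(total_items=10, chunk_size=4)
assignment = assign_to_committees(
    tasks, nodes=["n0", "n1", "n2", "n3"], committee_size=2
)
```

Here ten items become three tasks, and the third task wraps around to the first committee because there are only two committees available.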

This 'divide and conquer' approach to parallel processing is the cornerstone of modern high-performance computing. When the work splits into largely independent pieces, a job that would take days on a single machine can finish far sooner once it is spread across many workers. This is what lets Lagrange target data scales and computational intensities that are out of reach for traditional serial blockchain execution.

Second, and most critically, after all the small committees have completed their computing tasks, Lagrange uses zero-knowledge proof technology (in particular, MapReduce-style schemes such as ZK-MapReduce) to 'compress' these thousands of scattered computation results, along with evidence that each was computed correctly, into a single, extremely concise ZK proof.

This final proof is like an audit summary report. It attests to the main chain that the computation started from the stated 1TB of input data, that every specified calculation was executed faithfully across the parallel workers, and that the reported result is what those calculations actually produce.
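The map-then-aggregate shape of this step can be sketched in a few lines of Python. This is only an analogy, under loud assumptions: SHA-256 digests stand in for the per-chunk and aggregated proofs, the workload is a simple sum, and the function names (`map_chunk`, `reduce_pair`, `aggregate`, `run`) are hypothetical. A hash is not a zero-knowledge proof and gives none of its guarantees; the sketch only shows how many partial results fold pairwise into one fixed-size artifact.

```python
import hashlib
from concurrent.futures import ThreadPoolExecutor


def map_chunk(chunk: list[int]) -> tuple[int, str]:
    """Compute a partial result and a digest standing in for its proof."""
    partial = sum(chunk)
    digest = hashlib.sha256(repr((chunk, partial)).encode()).hexdigest()
    return partial, digest


def reduce_pair(left: tuple[int, str], right: tuple[int, str]) -> tuple[int, str]:
    """Merge two partial results; a real system would prove this merge."""
    total = left[0] + right[0]
    digest = hashlib.sha256((left[1] + right[1]).encode()).hexdigest()
    return total, digest


def aggregate(results: list[tuple[int, str]]) -> tuple[int, str]:
    """Fold results pairwise, layer by layer, until one remains."""
    if not results:
        raise ValueError("nothing to aggregate")
    layer = list(results)
    while len(layer) > 1:
        next_layer = []
        for i in range(0, len(layer), 2):
            if i + 1 < len(layer):
                next_layer.append(reduce_pair(layer[i], layer[i + 1]))
            else:
                next_layer.append(layer[i])  # odd one out moves up unchanged
        layer = next_layer
    return layer[0]


def run(data: list[int], chunk_size: int) -> tuple[int, str]:
    chunks = [data[i:i + chunk_size] for i in range(0, len(data), chunk_size)]
    with ThreadPoolExecutor() as pool:  # chunks are processed independently
        results = list(pool.map(map_chunk, chunks))
    return aggregate(results)


total, root_digest = run(list(range(10)), chunk_size=3)
print(total)             # 45
print(len(root_digest))  # 64 hex characters, regardless of input size
```

The point to notice is that the final artifact stays the same size no matter how many chunks went in. In a ZK setting, each reduce step would additionally prove that its child proofs are valid, which is what lets the chain check only the final proof instead of redoing the work.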

Smart contracts on the main chain no longer need to care how complex the intermediate steps were or how many committees took part in the calculation. They only need to pay a small gas cost to verify this final proof. Once the proof verifies, the contract can treat the result of the complex computation as authoritative.

In this way, Lagrange opens a door to large-scale computation for Web3. It lets developers design applications without being bound by on-chain performance limits. Whether it is a DeFi derivatives protocol that needs real-time analysis of massive on-chain data, a fully on-chain game that aims to build a persistent and complex physical world, or an application that runs verifiable AI models, the verifiable parallel computing capability Lagrange provides is meant to serve as the underlying infrastructure.

This represents a paradigm shift that raises the ceiling on what on-chain applications can do, allowing blockchain to evolve from a 'global ledger' into a 'global trusted computing platform.'