For many people, Lagrange is synonymous with 'cross-chain verification' and 'ZK light clients'. The label is not wrong, but stopping there underestimates how far its engineering and product have come over the past year. There are now three product lines: the ZK Coprocessor (moving heavy computation off-chain and verifying the results on-chain), Lagrange State Committees (LSC) (a ZK light client network built on Ethereum restaking), and DeepProve zkML (turning AI inference into verifiable cryptographic artifacts).

Together, the three lines cover the complete 'compute, prove, transmit' pathway: on-chain applications send problems to the Coprocessor for computation, LSC proves cross-chain state and finality, zkML turns AI inference into verifiable evidence, and the contract side handles final settlement and triggering. The official positioning of the three components is straightforward. ZK Coprocessor 1.0 lets contracts verify the results of custom SQL queries directly. LSC is a decentralized light client network that scales with the number of protocols using it and layers on EigenLayer security. The homepage also shows live cumulative metrics for DeepProve (number of AI inference proofs, number of ZK proofs generated, number of unique users, and number of integrated projects). These are the primary sources for understanding where each component's boundaries lie.

1. ZK Coprocessor: Letting contracts 'query history' without being choked by gas and storage.

The design goal of the Coprocessor is simple: let contracts run complex queries and aggregations over large volumes of historical state at an affordable cost, then verify the results on-chain with ZK proofs. The official engineering notes for version 1.0 and the Euclid Testnet repeatedly emphasize two points. First, SQL semantics serve as the developer-facing shell, while the underlying computation is broken down into recursive circuits for hyper-parallel distributed proving. Second, the proof network is horizontally scalable, allowing for larger-scale computations at the same cost by slicing tasks across multiple machines in parallel. For dApps that need 'historical attribution, KYC/compliance look-through, path reconstruction, address profiling, and cross-chain asset aggregation', this moves work previously done by 'off-chain black-box services' into a cryptographically verifiable model. The funding announcement published on Business Wire also states, 'Breaking large tasks into parallel processing across multiple machines allows proof performance and scale to reach levels that traditional paths find hard to achieve.'
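The 'slice across machines, then aggregate recursively' idea can be sketched in a few lines of Python. This is only an illustration under loud assumptions: the function names (`split_range`, `prove_slice`, `merge`, `run_query`) are hypothetical, the 'proof' is a plain SHA-256 commitment standing in for a real ZK proof, and a thread pool stands in for a distributed prover network. It is not Lagrange's API or circuit design.

```python
from __future__ import annotations

import hashlib
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass


@dataclass(frozen=True)
class PartialResult:
    total: int       # partial aggregate for one slice, e.g. SUM(amount)
    count: int       # number of rows covered by this slice
    commitment: str  # hash stand-in for a proof; NOT a real ZK proof


def split_range(start: int, end: int, chunk: int) -> list[tuple[int, int]]:
    """Split the inclusive block range [start, end] into slices of at most `chunk` blocks."""
    return [(lo, min(lo + chunk - 1, end)) for lo in range(start, end + 1, chunk)]


def prove_slice(rows: dict[int, int], lo: int, hi: int) -> PartialResult:
    """Aggregate one slice and commit to the result (hypothetical worker step)."""
    values = [rows[b] for b in range(lo, hi + 1) if b in rows]
    total = sum(values)
    digest = hashlib.sha256(f"{lo}:{hi}:{total}:{len(values)}".encode()).hexdigest()
    return PartialResult(total, len(values), digest)


def merge(a: PartialResult, b: PartialResult) -> PartialResult:
    """Combine two partial results; mimics one step of recursive aggregation."""
    digest = hashlib.sha256((a.commitment + b.commitment).encode()).hexdigest()
    return PartialResult(a.total + b.total, a.count + b.count, digest)


def aggregate(parts: list[PartialResult]) -> PartialResult:
    """Pairwise-merge partial results layer by layer until one remains."""
    layer = list(parts)
    while len(layer) > 1:
        nxt = [merge(layer[i], layer[i + 1]) for i in range(0, len(layer) - 1, 2)]
        if len(layer) % 2:  # carry an unpaired element up to the next layer
            nxt.append(layer[-1])
        layer = nxt
    return layer[0]


def run_query(rows: dict[int, int], start: int, end: int,
              chunk: int = 4, workers: int = 4) -> PartialResult:
    """Roughly 'SELECT SUM(value), COUNT(*) WHERE block BETWEEN start AND end'."""
    slices = split_range(start, end, chunk)
    with ThreadPoolExecutor(max_workers=workers) as pool:
        parts = list(pool.map(lambda s: prove_slice(rows, *s), slices))
    return aggregate(parts)
```

For example, `run_query({b: b for b in range(1, 11)}, 1, 10, chunk=3)` splits blocks 1 to 10 into four slices, aggregates each one independently, and merges the partial results in a tree. The point of the tree shape is that each merge step depends only on two children, so adding machines shortens the wall-clock time without changing the final result.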

2. LSC: Not a 'k-of-n bridge' model, but a scalable light client network.

LSC is positioned as a restaking-based ZK light client path for optimistic rollups. Each committee consists of a group of operators, each restaking 32 ETH on EigenLayer, which in effect makes it an AVS (Actively Validated Service); together they attest to the finality of the target rollup and provide state proofs for it. Unlike an isolated 'k-of-n' bridge, LSC lets the number of nodes grow without a fixed cap as the ecosystem grows, and its security collateral grows super-linearly, turning 'more protocols sharing the same state committee' into a shared security zone. Lagrange's official articles, its collaboration with Arbitrum, and the Coinbase developer guides all describe the same structure and operating model. For developers, the result is that cross-chain state can be read faster and more reliably without introducing additional trust.
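A stake-weighted quorum check gives a feel for how a committee of restaked operators differs from a fixed 'k-of-n' signer set. The sketch below is hypothetical: the 32 ETH minimum comes from the description above, but the two-thirds threshold, the `Operator` type, and the `has_quorum` function are assumptions made for illustration, and signature verification is omitted entirely.

```python
from __future__ import annotations

from dataclasses import dataclass
from fractions import Fraction

MIN_STAKE_ETH = 32  # per-operator restake mentioned in the text


@dataclass(frozen=True)
class Operator:
    operator_id: str
    stake_eth: int


def eligible(operators: list[Operator]) -> list[Operator]:
    """Keep only operators that meet the minimum restake."""
    return [op for op in operators if op.stake_eth >= MIN_STAKE_ETH]


def has_quorum(operators: list[Operator], attesting_ids: set[str],
               threshold: Fraction = Fraction(2, 3)) -> bool:
    """True if attesting operators hold at least `threshold` of eligible stake.

    The 2/3 default is an assumed value, not a documented LSC parameter.
    """
    committee = eligible(operators)
    total = sum(op.stake_eth for op in committee)
    if total == 0:
        return False
    signed = sum(op.stake_eth for op in committee if op.operator_id in attesting_ids)
    return Fraction(signed, total) >= threshold
```

Because the check is expressed as a fraction of total stake rather than a fixed signer count, the committee can add operators without changing the rule, which is the property the text contrasts with 'k-of-n' bridges.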

3. From 'cross-chain messages' to 'cross-ecosystem data': The application scope of LSC is not just bridging.

LSC is often regarded as infrastructure for 'cross-chain messages', but it is actually closer to a 'cross-ecosystem data layer'. The official technical articles on Arbitrum and Mantle break the workflow down in detail: once the DA layer confirms a transaction batch as final, the committee attests to the finality of the corresponding block and generates a state proof that external parties can consume; downstream applications use it to complete verification and invocation on the target chain. This pathway carries one less layer of implicit trust than a traditional bridge, which makes it suitable for use cases such as cross-chain liquidation, cross-chain yield distribution, and cross-chain governance snapshots, where 'data is king'.
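From the consuming application's side, the workflow above amounts to three gates checked in order: the batch is final, the committee has attested to a state root, and the specific value being read is proven against that root. The sketch below illustrates that ordering only. It assumes a simple SHA-256 Merkle inclusion proof as the 'state proof'; the actual proof format used by LSC may differ, and `verify_inclusion` and `can_consume` are hypothetical names.

```python
from __future__ import annotations

import hashlib
from typing import Optional


def h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def verify_inclusion(leaf: bytes, proof: list[tuple[bytes, str]], root: bytes) -> bool:
    """Check a Merkle inclusion proof.

    `proof` is a list of (sibling_hash, side) pairs from leaf to root, where
    side is "L" if the sibling sits on the left and "R" if it sits on the right.
    """
    node = h(leaf)
    for sibling, side in proof:
        node = h(sibling + node) if side == "L" else h(node + sibling)
    return node == root


def can_consume(batch_final: bool, attested_root: Optional[bytes],
                leaf: bytes, proof: list[tuple[bytes, str]]) -> bool:
    """Gate a cross-chain read on finality, attestation, then proof validity."""
    if not batch_final:          # DA layer has not confirmed the batch yet
        return False
    if attested_root is None:    # committee has not attested to a state root
        return False
    return verify_inclusion(leaf, proof, attested_root)
```

As a usage example, with two leaves `l0` and `l1` and `root = h(h(l0) + h(l1))`, the call `can_consume(True, root, l0, [(h(l1), "R")])` succeeds, while changing a single byte of `l0` or passing `batch_final=False` makes it fail.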

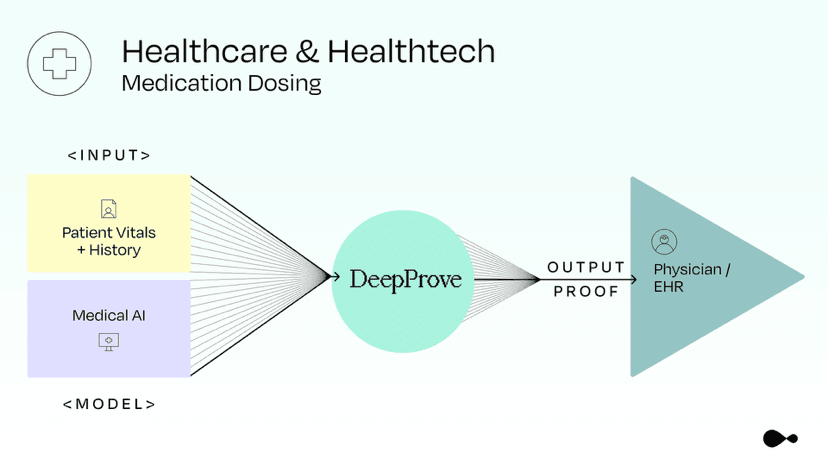

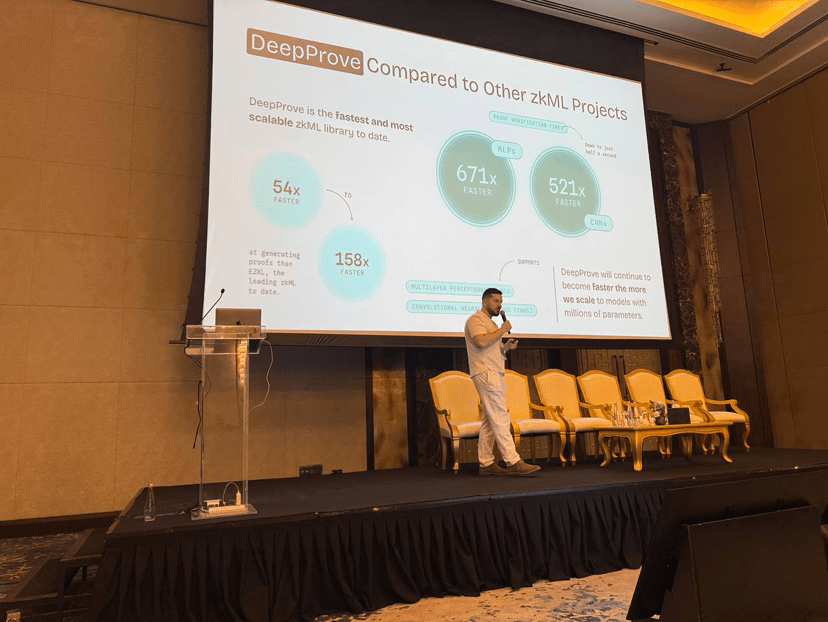

4. DeepProve: Turning AI inference into verifiable artifacts.

Over the past year, Lagrange has taken zkML from papers and experiments to a 'visible' milestone. The July engineering update recorded an important one: a full end-to-end proof of GPT-2 inference, which adds Transformer-level inference to the existing support for MLPs and CNNs. The homepage of the official website also presents a set of headline metrics: 3 million+ AI inference proofs, 11 million+ ZK proofs generated, 140,000+ unique users, and 30+ integrated AI projects. This means zkML is no longer just a 'narrative' but has reached the stage of 'being used, being integrated, and being measurable'.

5. Integration on the chip and cloud side: closer computation, lower latency.

Collaboration around zkML is also advancing. CMC's 'latest updates' channel and the community have repeatedly mentioned Lagrange's cooperation with Intel (connecting DeepProve with Intel's AI cloud facilities for scalable, privacy-friendly inference verification), with a timeline pointing to early August; a mid-July entry also noted 'completion of OpenZeppelin audit fixes'. This information helps explain its direction of 'bringing proof systems closer to mainstream computing platforms'. For technical details and SLAs involving vendors, however, it is best to rely on the official engineering logs and collaboration announcements as they are released. When writing about these items, a phrase such as 'disclosed via community and aggregator channels, awaiting official details' can be used to limit the risk of overstating them.

6. Linking the three lines together explains why it 'behaves like a network rather than a library'.

The Coprocessor addresses 'what to compute and how to compute it', LSC addresses 'how to obtain evidence across ecosystems', and zkML addresses 'how AI output can be verified'. Together they point to one outcome: applications can run complex calculations over historical data, attach cryptographic proofs to external inference, read state from other ecosystems, and chain these results into an automatically executable workflow without introducing additional trusted parties. For use cases such as payroll distribution for RWA, cross-chain restaking yield distribution, risk-control and liquidation bots, and model outsourcing and review, this 'compute, prove, transmit' pathway replaces black-box outsourcing with a composable system that is verifiable on-chain and high-performance off-chain.

7. Supporting evidence: Funding and ecosystem connections are 'hard indicators'.

In addition to engineering logs, funding and ecosystem channels also reflect 'how much it is being used'. In May 2024, Lagrange announced the completion of a $13.2 million seed round, and the funding announcement listed 'horizontal parallelism, distributed proving, and targeting big-data workloads' as the investment rationale. Research and tutorial articles from Binance Research and Binance Academy likewise identify 'SQL-type Coprocessor', 'cross-chain verifiable computation', and 'Prover Network' as the key terms for understanding the network. For anyone who needs to explain the project to an outside audience, these materials serve a dual purpose: 'external endorsement + technical details'.

8. An 'observation table' for developers and product managers: Don't just look at TPS.

To determine whether this system truly 'behaves like a network', it is advisable to monitor three curves monthly:

1) Proving-side performance: average proof latency, recursive aggregation depth, and cross-machine scaling efficiency; compare against the engineering logs to see the differences before and after key releases.

2) Cross-ecosystem reads: the list of target chains supported by LSC, new integrations, the daily success rate of state queries, and the retry ratio; cross-check against the LSC introduction and collaboration case studies.

3) zkML usage: DeepProve's inference proof volume, unique users, and number of integrated projects, read alongside the dates on which new model families gain support.
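The first two curves can be computed from whatever request logs an integrating team already keeps. The sketch below is a minimal example of that bookkeeping; the `QueryRecord` fields and the `summarize` function are hypothetical, and the definitions used here (a 'retry' is any query that took more than one attempt) are assumptions rather than official metric definitions.

```python
from __future__ import annotations

from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class QueryRecord:
    succeeded: bool
    attempts: int           # 1 means the query needed no retry
    proof_latency_s: float  # wall-clock time until the proof was available


def summarize(records: list[QueryRecord]) -> dict[str, float]:
    """Compute success rate, retry ratio, and average proof latency for one period."""
    if not records:
        raise ValueError("no records for this period")
    n = len(records)
    return {
        "success_rate": sum(r.succeeded for r in records) / n,
        "retry_ratio": sum(r.attempts > 1 for r in records) / n,
        "avg_proof_latency_s": mean(r.proof_latency_s for r in records),
    }
```

Running `summarize` once per month over the same log source keeps the numbers comparable across releases, which matters more than the exact metric definitions chosen.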

9. Risks and boundaries.

- External dependencies: Changes in upstream components such as restaking, DA, and sequencing propagate to LSC's operational boundaries; 'failure mode rehearsals' must be conducted before going live.

- Proving cost and economics: Large-scale tasks need to be economically viable. It is recommended to split work into 'offline pre-computation + fast online verification' and to cache proofs so they can be reused.

- Ecosystem consistency: Finality and rollback semantics differ across target chains, so consuming cross-chain state requires matching safety buffers and observation periods.
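Two of the mitigations above, proof caching and per-chain safety buffers, are simple to prototype. The sketch below is a hypothetical illustration: the chain names and buffer sizes in `SAFETY_BUFFER_BLOCKS` are placeholder values, not recommendations, and `ProofCache` treats a proof as an opaque string produced by a caller-supplied `prove` function.

```python
from __future__ import annotations

import hashlib
from typing import Callable

# Placeholder per-chain buffers in blocks; real values depend on each
# chain's finality and rollback semantics.
SAFETY_BUFFER_BLOCKS = {"chain-a": 12, "chain-b": 64}


def safe_to_consume(chain: str, state_block: int, latest_finalized_block: int) -> bool:
    """Only consume state that is at least the chain's buffer behind finality."""
    buffer = SAFETY_BUFFER_BLOCKS.get(chain)
    if buffer is None:
        return False  # unknown chain: refuse rather than guess a buffer
    return latest_finalized_block - state_block >= buffer


class ProofCache:
    """Reuse a proof when the same query is asked over the same block range."""

    def __init__(self) -> None:
        self._store: dict[str, str] = {}

    @staticmethod
    def key(query: str, start_block: int, end_block: int) -> str:
        return hashlib.sha256(f"{query}|{start_block}|{end_block}".encode()).hexdigest()

    def get_or_compute(self, query: str, start_block: int, end_block: int,
                       prove: Callable[[str, int, int], str]) -> str:
        k = self.key(query, start_block, end_block)
        if k not in self._store:
            # Expensive path: only taken on a cache miss.
            self._store[k] = prove(query, start_block, end_block)
        return self._store[k]
```

Keying the cache on the query text plus the block range is safe only because historical ranges are immutable once final; a range that can still be rolled back should not be cached, which is why the buffer check belongs in front of the cache.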

10. Summary.

Viewed as a whole, the three product lines make Lagrange's network character apparent: the Coprocessor provides computation and data, LSC provides verifiability across ecosystems, and zkML makes AI output verifiable. When you build applications on this pipeline, many stages that previously relied on 'trusted services' can be replaced by a procedural 'proof + verification' flow. This is also the fundamental reason it can continue to hold developers' attention in the second half of 2025.

@Lagrange Official #lagrange $LA