$ROBO #ROBO @Fabric Foundation

I didn’t start looking into @Fabric Foundation because I wanted another robotics story.

Honestly, we already hear enough about automation, AI agents, and the future of machines. Every narrative sounds familiar: smarter robots, faster models, autonomous systems replacing human tasks. But the more I followed that conversation, the more something felt incomplete.

We talk endlessly about what machines can do.

Almost nobody talks about how we verify what they actually did.

And that gap becomes serious the moment machines move from software experiments into real-world environments: logistics, mobility, manufacturing, or autonomous infrastructure. When a robot acts in the physical world, trust cannot rely on a private server log or a centralized dashboard. The consequences become economic, operational, and sometimes even safety-critical.

That shift is what made Fabric interesting to me.

The real problem isn’t intelligence, it’s accountability

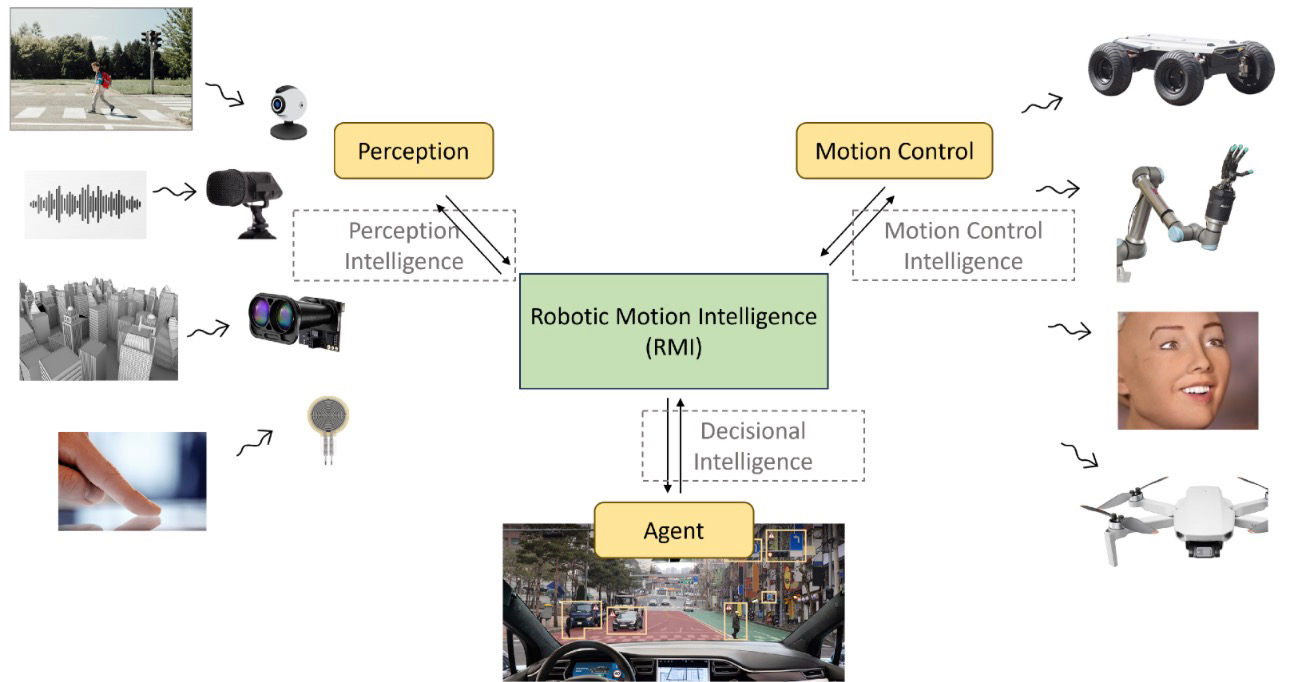

Most projects focus on building better robots. Better sensors. Better autonomy. Better decision-making.

But imagine a world where machines already work efficiently. The next question isn’t how smart they are. It’s who verifies their actions.

If a robot updates its behavior, who confirms that change?

If an autonomous system completes a task, who proves it actually happened?

If millions of machines begin transacting value, who ensures coordination isn’t manipulated?

Right now, that responsibility lives inside private infrastructure. Companies verify their own machines. Logs remain internal. Data is controlled by whoever owns the system.

That model works for experimentation. It doesn’t scale well for open economic systems.

Fabric approaches the problem from a different angle: instead of only improving machines, it focuses on building shared verification.

Shared truth instead of private trust


What stood out to me is how Fabric treats verification as infrastructure rather than an add-on.

The idea sounds simple: actions, computation, and system updates are anchored to a public, verifiable environment. Not for hype, for proof.

If a machine performs a task, the result can be audited.

If computation changes, it becomes visible.

If coordination happens across systems, records exist beyond a single organization.

That sounds small at first, but it completely changes how autonomous systems can exist economically.

Because once machines operate in open environments, trust needs to move from institutions to mechanisms.
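The anchoring idea above can be illustrated with a small sketch. This is not Fabric's actual protocol, which isn't detailed here. It is a generic hash-chained log, written under the assumption that each machine action is a small JSON record; the names `entry_hash`, `append`, `verify`, and the example machine `robot-7` are all hypothetical. Each entry commits to the hash of the one before it, so editing any past record breaks every later link.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def entry_hash(prev_hash: str, record: dict) -> str:
    """Hash a record together with the hash of the previous entry."""
    # Canonical JSON (sorted keys, fixed separators) so the same record
    # always produces the same bytes.
    payload = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append(log: list, record: dict) -> None:
    """Append a record, linking it to the current tail of the log."""
    prev = log[-1]["hash"] if log else GENESIS
    log.append({"record": record, "prev": prev, "hash": entry_hash(prev, record)})


def verify(log: list) -> bool:
    """Recompute every link; any edited record or broken link fails."""
    prev = GENESIS
    for entry in log:
        if entry["prev"] != prev:
            return False
        if entry["hash"] != entry_hash(prev, entry["record"]):
            return False
        prev = entry["hash"]
    return True


# Hypothetical records for an imaginary machine.
log = []
append(log, {"machine": "robot-7", "action": "deliver_package", "status": "done"})
append(log, {"machine": "robot-7", "action": "update_firmware", "version": "1.2.0"})
print(verify(log))  # True

# Quietly rewriting history is detectable by anyone who holds the log.
log[0]["record"]["status"] = "failed"
print(verify(log))  # False
```

A log like this only shows that records were not altered after the fact. It says nothing about whether the machine reported honestly in the first place, and a real shared system would also need signatures and independent parties holding copies of the chain.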

Machines acting vs humans signing

Most blockchain systems were built around human assumptions:

Humans hold wallets

Humans approve transactions

Humans sign intent

Fabric flips that mental model.

It assumes that machines themselves might participate in coordination and economic flows. This is what people call agent-native infrastructure, but the practical idea is simple: systems designed for machine participation from the start.

Instead of forcing automation into human-centric rails, Fabric explores what happens when machines:

interact economically,

verify outcomes,

and coordinate through transparent rules rather than centralized authority.

Whether adoption happens quickly or slowly isn’t the key insight. The key insight is that this design anticipates a different type of participant entirely.

Verification as the long-term advantage

Automation usually gets framed as a race toward intelligence. But intelligence without accountability quickly becomes fragile.

As machine systems scale, trust becomes the bottleneck.

You can already see this pattern in AI: models are improving rapidly, yet debates around reliability, hallucination, and validation keep growing louder. Robotics will likely encounter a similar transition. The question won’t just be “Can it act?” but “Can we prove what happened?”

Fabric’s emphasis on verifiable computation addresses that pressure directly. Instead of assuming perfect behavior, it attempts to make results observable.

In practical terms, verification becomes the guardrail that allows autonomy to scale safely.

Why the economic layer matters

The other piece that caught my attention is the role of $ROBO.

It’s easy to look at any token and assume it exists for speculation. But in this architecture, the intention feels closer to an operational layer, one that coordinates incentives between builders, operators, and validators within the system.

If machines eventually participate in economic flows, there needs to be a way to align behavior:

work performed,

verification provided,

coordination maintained.

That’s where an economic layer begins to make sense: not as hype, but as structure.

Of course, execution is everything. Infrastructure only matters if adoption follows. But conceptually, the direction feels coherent.

Open infrastructure changes the tone

Another detail that changes how I read the project is the foundation approach.

When infrastructure is built as open rails rather than under closed corporate ownership, the long-term incentives shift. It becomes less about building the best private robotics platform and more about creating shared standards that different participants can rely on.

That doesn’t guarantee success (nothing does), but it changes the conversation from product competition to ecosystem design.

And that feels more aligned with where autonomous systems might need to go.

The bigger shift most people ignore

I don’t think Fabric is simply building robots.

It’s attempting to solve something quieter but arguably more important: how autonomy becomes accountable.

As machines move into real economic environments, verification stops being optional. Without shared proof, trust collapses back into centralized control, and autonomy becomes an illusion.

If the future includes machines operating beside humans, the real infrastructure won’t just be intelligence.

It will be systems that prove what happened.

And that might be the part most people are still underestimating.