@Mira - Trust Layer of AI

AI sounds confident even when it is wrong. That is the real danger. A system can give a smooth answer, use perfect grammar, and still share false information. In areas like finance, healthcare, or law, that kind of mistake is not small. It can cost money, safety, or trust. Mira Network was built to deal with this exact problem. It does not try to make AI more creative or faster. It tries to make AI prove what it says.

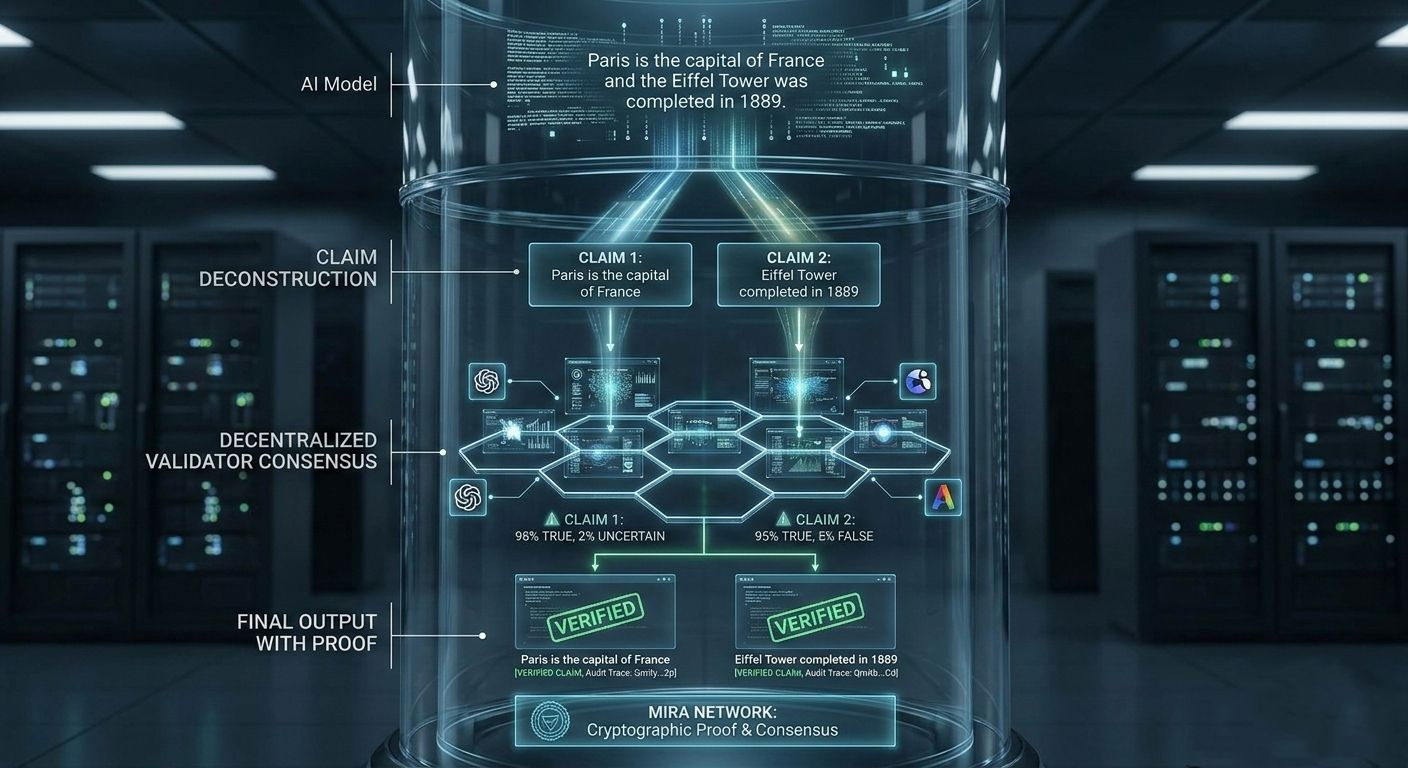

Mira Network works in a different way from most AI projects. It does not create a new chatbot. It does not compete with large language models. Instead, it acts like a verification layer. When an AI generates an answer, Mira breaks that answer into small factual statements. For example, if an AI says, “Paris is the capital of France and the Eiffel Tower was completed in 1889,” Mira separates those into two claims. Each claim is then checked on its own. This makes verification more accurate and more transparent.
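A toy version of this splitting step shows the idea. It is a minimal sketch under a loud assumption. The hypothetical `split_into_claims` function just cuts on sentence endings and on the word "and". A real system would need a language model to decide where one factual claim ends and the next begins, because a naive splitter would wrongly break a phrase like "salt and pepper".

```python
import re


def split_into_claims(text: str) -> list[str]:
    """Hypothetical toy claim splitter: split on sentence ends, then on ' and '.

    This only illustrates turning one answer into independently checkable
    statements. It is not Mira's actual decomposition method.
    """
    claims = []
    # Split into sentences at '.', '!' or '?' followed by whitespace or end of text.
    for sentence in re.split(r"[.!?](?:\s+|$)", text):
        sentence = sentence.strip()
        if not sentence:
            continue
        # Naively treat each ' and '-joined clause as its own claim.
        for clause in sentence.split(" and "):
            clause = clause.strip()
            if clause:
                claims.append(clause)
    return claims


answer = "Paris is the capital of France and the Eiffel Tower was completed in 1889."
print(split_into_claims(answer))
# ['Paris is the capital of France', 'the Eiffel Tower was completed in 1889']
```

Each string in the result can then be sent out for checking on its own.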

After breaking the response into small claims, the system sends those claims to independent validators. These validators are separate nodes in the network, and each one runs its own AI model or verification system, so the result does not rely on one single source. Every validator checks the claim and gives a judgment. If most of them agree that the claim is true, it passes. If most judge it false, it fails, and if there is no clear majority, it is marked as uncertain. The decision is recorded with cryptographic proof, which means it can be tracked and audited later.
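The voting and recording steps can be sketched in a few lines. This is not Mira's real protocol. The function names, the simple-majority `threshold`, and the use of a plain SHA-256 digest as the audit fingerprint are all illustrative assumptions. A production system would also sign each record, because anyone who alters a record could otherwise just recompute an unsigned digest.

```python
import hashlib
import json
from collections import Counter


def decide(votes: dict[str, bool], threshold: float = 0.5) -> str:
    """Hypothetical consensus rule: a verdict needs MORE than `threshold` of all votes.

    Keep threshold >= 0.5 so that 'verified' and 'rejected' cannot both qualify.
    """
    if not votes:
        return "uncertain"
    counts = Counter(votes.values())
    total = len(votes)
    if counts[True] / total > threshold:
        return "verified"
    if counts[False] / total > threshold:
        return "rejected"
    # No side cleared the threshold: validators disagree.
    return "uncertain"


def record(claim: str, votes: dict[str, bool], verdict: str) -> dict:
    """Build an audit record whose SHA-256 digest changes if any field is altered."""
    body = {"claim": claim, "votes": votes, "verdict": verdict}
    canonical = json.dumps(body, sort_keys=True).encode("utf-8")
    return {**body, "digest": hashlib.sha256(canonical).hexdigest()}


votes = {"node-a": True, "node-b": True, "node-c": False}
verdict = decide(votes)
print(verdict)  # verified
rec = record("Paris is the capital of France", votes, verdict)
print(rec["digest"][:16])  # first characters of the audit fingerprint
```

Raising `threshold` (for example to 2/3) turns the same code into a supermajority rule, which trades more "uncertain" results for fewer false passes.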

The network uses staking to keep validators honest. Validators must lock MIRA tokens to participate. If they act honestly and their evaluations match the final consensus, they earn rewards. If they repeatedly give wrong or dishonest judgments, they can lose part of their stake. This creates a financial reason to verify carefully instead of guessing. Accuracy becomes profitable. Carelessness becomes expensive.
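A small simulation makes the incentive concrete. The reward amount, slash fraction, and three-strike rule below are hypothetical numbers chosen for readability, not MIRA's real parameters. Reading "repeatedly" as "several mismatches in a row" is also an assumption. The sketch only shows why matching consensus pays and repeated mismatches cost stake.

```python
from dataclasses import dataclass

# Hypothetical parameters, chosen only for illustration.
REWARD = 1.0           # tokens earned for a vote that matches consensus
SLASH_FRACTION = 0.10  # share of stake lost once the strike limit is reached
STRIKE_LIMIT = 3       # consecutive mismatches tolerated before slashing


@dataclass
class Validator:
    stake: float
    rewards: float = 0.0
    strikes: int = 0


def settle(v: Validator, vote: bool, consensus: bool) -> None:
    """Reward agreement with consensus; slash after repeated disagreement."""
    if vote == consensus:
        v.rewards += REWARD
        v.strikes = 0  # an honest vote resets the mismatch streak
    else:
        v.strikes += 1
        if v.strikes >= STRIKE_LIMIT:
            v.stake -= v.stake * SLASH_FRACTION
            v.strikes = 0


careful = Validator(stake=1000.0)
careless = Validator(stake=1000.0)
for _ in range(3):
    settle(careful, vote=True, consensus=True)
    settle(careless, vote=False, consensus=True)
print(careful.rewards, careful.stake)    # 3.0 1000.0
print(careless.rewards, careless.stake)  # 0.0 900.0
```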

The MIRA token is not just for rewards. It is also used for governance and network participation. The total supply is limited, and tokens are distributed for ecosystem growth, validator incentives, and community development. People who do not want to run full validator nodes can still support the network by delegating tokens. This helps keep the system decentralized while allowing more people to participate.

One important part of Mira’s design is diversity. Different validators may use different AI models. This reduces the risk that one single model’s bias controls the final result. However, diversity is still a challenge. If too many validators rely on similar data sources, bias can still exist. Mira reduces risk, but it does not magically remove every problem in AI. It builds a structure that makes errors easier to detect and harder to hide.

Mira Network has also worked with decentralized GPU providers such as io.net and Aethir. These partnerships help provide the computing power needed for large-scale verification. Checking multiple claims across many validators requires strong infrastructure. Decentralized compute networks help distribute that workload.

Some AI applications are already integrating Mira’s verification layer. For example, Klok AI uses verification to improve the reliability of responses before showing them to users. Instead of trusting a single model’s output, these platforms add an extra step to confirm accuracy. This approach is especially useful for research tools, financial analysis, and enterprise systems, where mistakes can have serious impact.

There are still challenges. Verification takes time and computing resources. Not every casual conversation needs full decentralized consensus. For simple tasks, speed may matter more than perfect accuracy. But in high-risk environments, verified AI can make a major difference. The future may include hybrid systems, where important outputs are verified while low-risk answers are delivered instantly.
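A hybrid setup like this could route answers by risk. Here is a minimal sketch under stated assumptions. The `HIGH_RISK_DOMAINS` set is taken from the examples in this article, and `respond` and `stub_verifier` are hypothetical names. The stub stands in for a real, slower call to a verification network.

```python
from typing import Callable

# Assumption: the high-risk set mirrors the examples given in this article.
HIGH_RISK_DOMAINS = {"finance", "healthcare", "law"}


def respond(answer: str, domain: str, verify: Callable[[str], str]) -> tuple[str, str]:
    """Send high-risk answers through verification; return low-risk ones immediately."""
    if domain.lower() in HIGH_RISK_DOMAINS:
        # Slow path: run the (hypothetical) decentralized verification step.
        return answer, verify(answer)
    # Fast path: deliver instantly, without waiting for consensus.
    return answer, "unverified (low risk)"


def stub_verifier(answer: str) -> str:
    """Stand-in for a real verification call; always reports 'verified'."""
    return "verified"


print(respond("Your portfolio is 60% bonds.", "Finance", stub_verifier))
print(respond("Here is a haiku about spring.", "chat", stub_verifier))
```

The cost of verification is only paid where a wrong answer would matter. Casual requests keep their speed.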

Regulation is another important factor. Governments are starting to pay close attention to AI systems, especially in Europe and other major markets. A verification layer like Mira can help companies meet compliance requirements. When every claim can be traced and audited, it becomes easier to show responsibility and transparency. This could make decentralized verification an important part of future AI standards.

At its core, Mira Network is about accountability. AI models will always be probabilistic. They predict likely answers based on data. That means mistakes will never fully disappear. Instead of chasing perfect intelligence, Mira focuses on structured checking. It accepts that AI can be wrong, and builds a system that challenges it every time it speaks.

If AI is going to run businesses, guide investments, or assist in medical advice, it cannot rely only on confidence. It needs proof. Mira Network represents a shift from blind trust in models to structured verification through distributed consensus. It turns AI answers into claims that must earn approval.

The real question is not whether AI can speak. It already can. The real question is whether AI can defend what it says. Mira’s entire mission is built around that idea. In a world where machines generate endless information, the systems that verify truth may become more important than the systems that create it.

@Mira - Trust Layer of AI #mira $MIRA