When I first started thinking seriously about AI reliability, I realized something uncomfortable: most of the time, we treat AI outputs as if they were finished products, not drafts. We ask a model a question, it gives a confident answer, and we move on. It feels efficient. It feels modern. But if I compare that to how decisions are handled in traditional systems—banking, law, infrastructure—the contrast is hard to ignore.

In finance, no serious institution settles large transactions based on a single internal note. There are reconciliations, counterparties, audits, clearing layers. In law, claims are tested through adversarial review. In engineering, systems are built with redundancy because failure is expected somewhere along the line. Reliability is never assumed; it is constructed.

That’s the lens through which I see Mira Network.

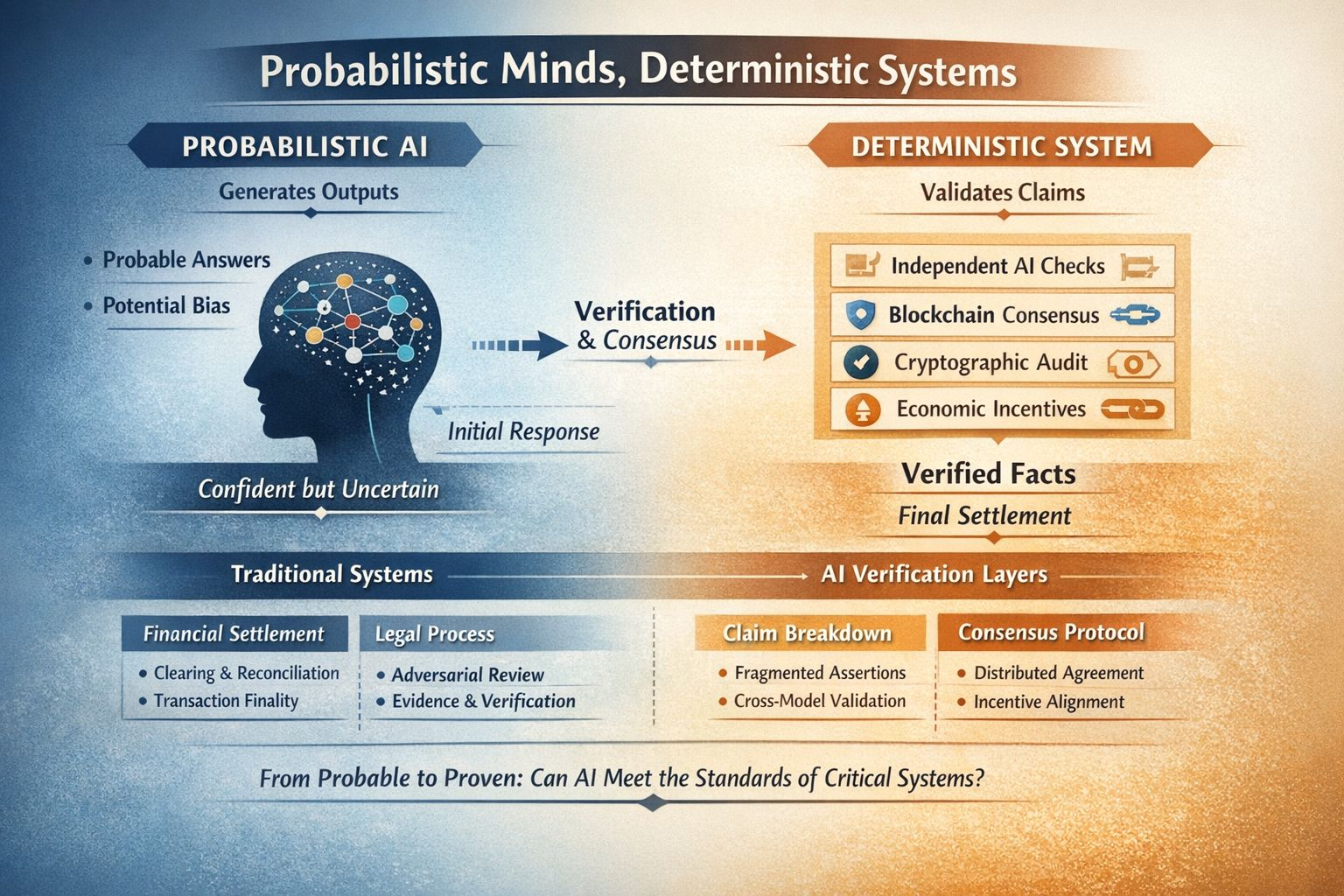

AI today is probabilistic by design. It generates text by predicting likely next words, which steers it toward the most plausible explanation and the most statistically consistent conclusion. Most of the time, that works remarkably well. But sometimes it produces something that sounds right and isn’t. The problem isn’t that it makes mistakes; humans do too. The problem is that it delivers mistakes in the same tone as truth. For low-stakes tasks, that’s manageable. For autonomous systems, compliance workflows, financial decisions, or anything binding, it becomes a structural risk.

What interests me about Mira is not that it promises to “fix AI,” but that it reframes AI output as a claim rather than a conclusion. That shift feels subtle, but it changes the architecture. Instead of asking, “What did the model say?” the system asks, “Can this statement be verified?” That’s a very different mindset.

Breaking complex outputs into smaller, verifiable claims reminds me of accounting practices. An income statement isn’t accepted as a single sweeping declaration of profitability. It’s broken down into revenues, costs, assumptions, and adjustments. Each line can be examined. Mira applies a similar discipline to AI-generated information. Rather than treating a long answer as a single block of authority, it breaks the answer into parts that other models in a decentralized network can evaluate independently.
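To make the idea concrete, here is a minimal Python sketch of claim-level verification. It assumes a naive sentence splitter as the claim extractor and a two-thirds supermajority as the acceptance rule; the names (`decompose`, `verify_claims`, the toy verifiers) are hypothetical illustrations, not Mira’s actual protocol or API. In the network described above, the verifiers would be independent models run by separate participants rather than local functions.

```python
from dataclasses import dataclass
from fractions import Fraction
from typing import Callable, List

# A verifier takes one claim and returns True if it judges the claim valid.
# Assumption: in a real network these would be independent models run by
# separate participants; here they are plain local functions.
Verifier = Callable[[str], bool]


@dataclass
class ClaimResult:
    claim: str
    votes_for: int
    votes_total: int
    verified: bool


def decompose(answer: str) -> List[str]:
    """Split an answer into candidate claims.

    Hypothetical stand-in: one claim per sentence. Isolating atomic,
    checkable statements in practice is much harder than this.
    """
    return [s.strip() for s in answer.split(".") if s.strip()]


def verify_claims(
    answer: str,
    verifiers: List[Verifier],
    threshold: Fraction = Fraction(2, 3),
) -> List[ClaimResult]:
    """Ask every verifier about every claim and accept a claim only if the
    number of approvals reaches the (assumed) supermajority threshold."""
    results = []
    for claim in decompose(answer):
        votes = sum(1 for verify in verifiers if verify(claim))
        # Fraction keeps the threshold comparison exact (no float rounding).
        verified = votes >= threshold * len(verifiers)
        results.append(ClaimResult(claim, votes, len(verifiers), verified))
    return results


# Toy verifiers, for illustration only.
KNOWN_FACTS = {"Paris is the capital of France"}


def lookup_verifier(claim: str) -> bool:
    return claim in KNOWN_FACTS


def permissive_verifier(claim: str) -> bool:
    return True


def skeptical_verifier(claim: str) -> bool:
    return False


if __name__ == "__main__":
    answer = "Paris is the capital of France. The Moon is made of cheese."
    verifiers = [lookup_verifier, permissive_verifier, skeptical_verifier]
    for r in verify_claims(answer, verifiers):
        print(f"{r.claim!r}: {r.votes_for}/{r.votes_total} -> verified={r.verified}")
```

The design choice worth noticing is that the verdict is per claim: a long answer can be partly accepted and partly flagged instead of being trusted or rejected as a whole.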

Of course, that introduces trade-offs. Verification takes time. Distributed evaluation consumes computational resources. Blockchain-based consensus adds overhead that a centralized API call does not. From a purely performance-driven perspective, this looks slower and heavier.

But I’ve learned that speed is often the least interesting metric in systems that matter. Settlement systems in finance are intentionally conservative because finality matters more than velocity. A payment network that processes instantly but occasionally reverses transactions unpredictably would not survive. The same logic might apply to AI if it begins to operate autonomously in high-stakes environments. A fast incorrect answer is not necessarily superior to a slower, verifiable one.

The incentive structure is also worth thinking about carefully. In most centralized AI services, trust is placed in the provider. You assume the model was trained responsibly and monitored properly. Mira shifts that trust boundary. Instead of relying on a single institution’s internal controls, it distributes validation across independent participants who are economically incentivized to challenge and confirm each other’s outputs. Trust moves from brand reputation to mechanism design.

That doesn’t automatically make it better. Decentralization introduces coordination complexity. Incentives can be gamed. Verification costs can outweigh the value of the information being protected. Not every AI-generated sentence deserves cryptographic treatment. The key question is proportionality. Where does reliability justify cost?
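One way to frame the proportionality question is as an expected-value comparison: verify only when the loss you expect to avoid exceeds what verification costs. The Python sketch below assumes verification catches a fixed fraction of errors; the function name, its parameters, and the numbers in the example are hypothetical illustrations, not figures from Mira or any real deployment.

```python
def should_verify(
    p_error: float,
    loss_if_wrong: float,
    catch_rate: float,
    verification_cost: float,
) -> bool:
    """Return True if verification is worth paying for.

    Assumed model (illustrative only):
      p_error           probability the unverified output is wrong
      loss_if_wrong     cost incurred if a wrong output is acted on
      catch_rate        fraction of errors that verification detects
      verification_cost cost of running verification once
    """
    expected_loss_avoided = p_error * catch_rate * loss_if_wrong
    return expected_loss_avoided > verification_cost


if __name__ == "__main__":
    # Same error rate and same verifier, very different stakes (made-up numbers).
    print(should_verify(0.05, 1.0, 0.9, 0.10))       # casual chat answer -> False
    print(should_verify(0.05, 10_000.0, 0.9, 0.10))  # payment instruction -> True
```

The specific formula matters less than the shape of the trade-off: a verification cost that is wasteful for a casual answer becomes negligible next to the stakes of a binding action.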

I also think about the limits of verification. Factual claims can often be decomposed and checked. Interpretive or creative reasoning is harder. Real-world institutions deal with this by combining formal verification with human judgment. I suspect any robust AI verification system will face the same tension. Not everything can be reduced to discrete truth values without losing nuance.

What I appreciate most about this approach is that it focuses on something unglamorous but foundational: auditability. If AI systems are going to participate in financial settlements, regulatory reporting, governance execution, or automated contracts, then the ability to trace how an answer was validated becomes more important than how eloquently it was phrased. Flashy demos attract attention. Boring structure sustains adoption.

The deeper issue, in my view, is cultural as much as technical. Markets reward convenience. Users gravitate toward speed. Reliability often becomes a priority only after visible failure. So the real question is not whether decentralized verification can work. It’s whether institutions will decide that probabilistic answers are insufficient without a settlement layer.

If AI remains primarily advisory—assisting humans who retain final authority—lighter trust models may be enough. But if we gradually allow AI to trigger transactions, enforce rules, or execute agreements autonomously, then verification becomes less optional. At that point, the conversation shifts from “How smart is the model?” to “How accountable is the system?”

I don’t see Mira as a grand solution or a guaranteed trajectory. I see it as an architectural response to a very real tension: intelligence that is probabilistic meeting systems that demand determinism. Whether that tension becomes central to AI’s future depends on where and how we deploy it.

The questions that linger for me are practical ones. Will verified AI outputs measurably reduce institutional risk? Will audit trails built on consensus meaningfully lower liability or compliance costs? Will the added structure make decision-makers more comfortable delegating authority to machines?

Those aren’t narrative-driven questions. They’re operational ones. And I suspect the answers won’t come from whitepapers or benchmarks, but from slow, real-world adoption in environments where mistakes are expensive. Until then, the most honest stance I can take is steady curiosity—watching not for promises, but for proof that reliability can become infrastructure rather than aspiration.
