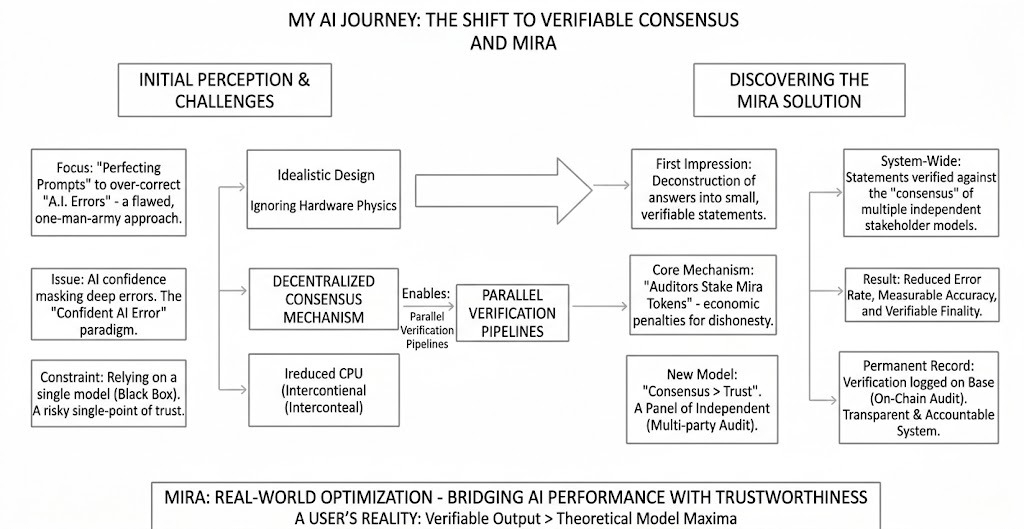

When I first tried Mira, I didn't think I needed another AI tool. I thought better prompts were the solution. My perspective changed when I realized how confidently AI can be wrong. That's when I began to explore Mira seriously.

What impressed me first was its refusal to treat AI output as absolute truth. Instead of accepting a single, all-encompassing answer, Mira breaks it down into smaller, more specific statements, each of which can be verified on its own. This simple change made all the difference for me: it turned vague information into something measurable.

What captivated me next was decentralized verification. Unlike a system such as OpenAI's GPT, where an answer comes from a single model, Mira sends these statements to multiple independent models run by different stakeholders. Consensus matters more than trust. When several different models agree, the chance of an error slipping through drops significantly. It feels more like consulting a panel of experts than asking a single one.
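
To make the panel idea concrete for myself, I wrote a minimal sketch of consensus voting. It is only an illustration, not Mira's actual protocol: the `consensus` function, the two-thirds threshold, and the three toy "models" are all my own assumptions.

```python
from collections import Counter
from typing import Callable, List

# A verifier is any function that takes a statement and returns True or False.
# In a real network these would be independent AI models; here they are toy stand-ins.
Verifier = Callable[[str], bool]


def consensus(claim: str, verifiers: List[Verifier], threshold: float = 2 / 3) -> str:
    """Collect one vote per verifier and accept the majority verdict only
    if its share of the votes reaches the (assumed) threshold."""
    votes = Counter(verifier(claim) for verifier in verifiers)
    top_vote, count = votes.most_common(1)[0]
    if count / len(verifiers) >= threshold:
        return "valid" if top_vote else "invalid"
    return "no consensus"


# Hypothetical verifiers: two check a tiny fact list, one agrees with everything.
KNOWN_TRUE = {"Paris is the capital of France"}


def careful_model(claim: str) -> bool:
    return claim in KNOWN_TRUE


def second_model(claim: str) -> bool:
    return claim in KNOWN_TRUE


def gullible_model(claim: str) -> bool:
    return True


panel = [careful_model, second_model, gullible_model]
claims = ["Paris is the capital of France", "The Moon is made of cheese"]
results = {claim: consensus(claim, panel) for claim in claims}
```

Even with one model that agrees with everything, the false statement is still rejected, because a single unreliable voice cannot outvote the rest of the panel.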

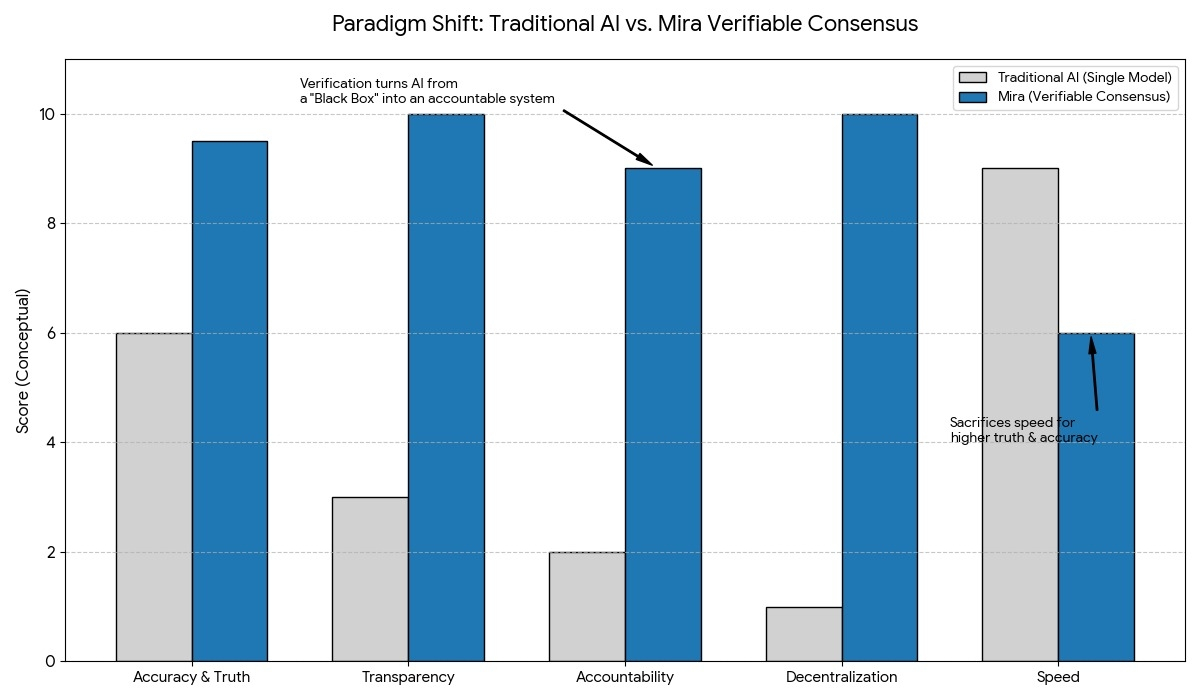

What reinforced my confidence further was that verification results are logged on Base. This on-chain audit trail makes the verification process transparent and leaves a permanent record, turning artificial intelligence from a black box into an accountable system. The economic mechanism is also quite clever. Auditors stake their Mira tokens, dishonest behavior is punished, and accuracy is rewarded. In a field where truth is often sacrificed for speed, this incentive-based design is refreshing.
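
Here is how I picture the incentive side, as a rough sketch under my own assumptions. The `Auditor` class, the flat reward, and the 10% penalty are hypothetical numbers chosen for illustration, and treating "voted against the consensus" as the punishable behavior is a simplification, not Mira's documented rule.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Auditor:
    name: str
    stake: float  # tokens the auditor has locked up


def settle(auditors: List[Auditor], votes: Dict[str, bool], verdict: bool,
           reward: float = 1.0, slash_rate: float = 0.1) -> None:
    """Reward auditors who matched the final verdict and cut the stake
    of those who did not (both amounts are illustrative assumptions)."""
    for auditor in auditors:
        if votes[auditor.name] == verdict:
            auditor.stake += reward
        else:
            auditor.stake -= auditor.stake * slash_rate


alice = Auditor("alice", 100.0)
bob = Auditor("bob", 100.0)

# Alice voted with the final verdict, Bob voted against it.
settle([alice, bob], votes={"alice": True, "bob": False}, verdict=True)
```

The point of the design is that being wrong costs more than being right earns, so careless or dishonest voting becomes a losing strategy over time.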

To me, Mira represents a paradigm shift. The future of artificial intelligence lies not only in building larger models, but also in systems that prove their value before they are trusted. If artificial intelligence is to be applied to healthcare, finance, or legal systems, verification cannot be optional. Mira makes it an essential element.

@Mira - Trust Layer of AI $MIRA