There was a moment when I was moving through a few on-chain actions during a period of noticeably higher activity, and everything looked normal on the surface, but the timing felt slightly off. Nothing failed, nothing got stuck, but the confirmation of one simple action took longer than I expected. I remember just waiting without doing anything else, not because I was worried, but because I was trying to understand whether the delay meant something or was just system load.

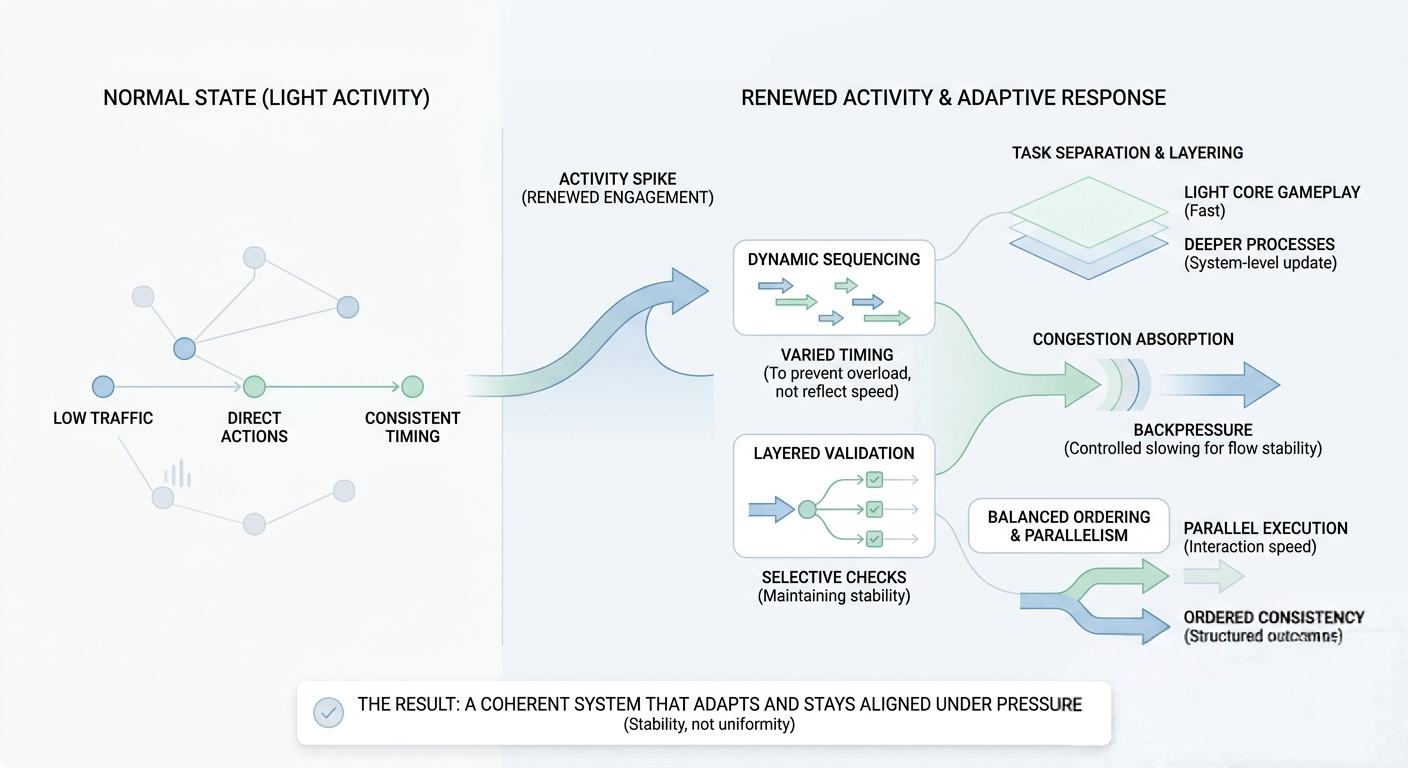

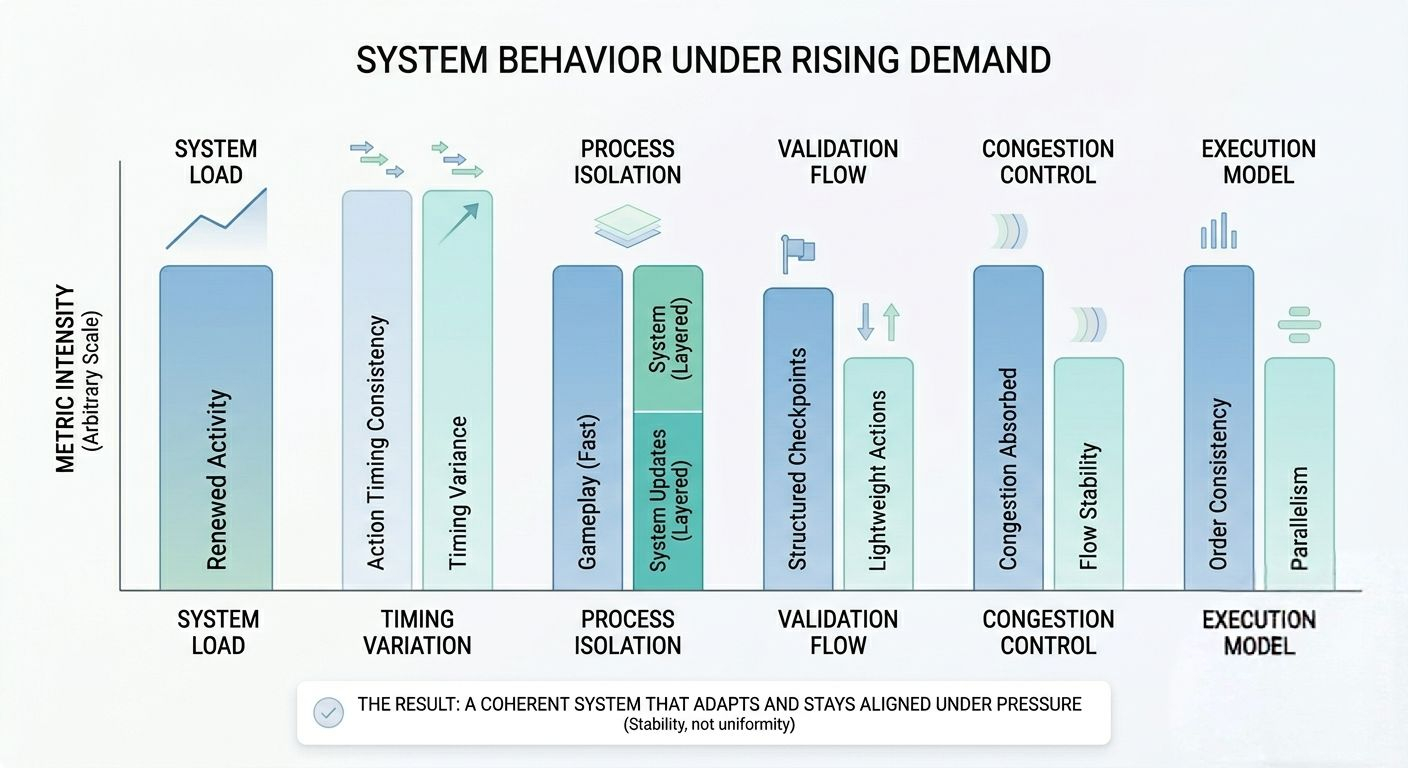

After seeing this happen a few times, what I noticed is that crypto systems rarely change in obvious ways. There is no clear “on” or “off” moment. Instead, what shifts is how the system behaves under pressure. When activity increases, everything still works, but the rhythm changes. Some actions feel immediate, others feel spaced out, and from the outside it can look like inconsistency even when it is actually controlled adjustment.

From a system perspective, this is where real infrastructure behavior becomes visible. Every interaction is part of a shared environment where actions are not isolated; they compete for processing, validation, and ordering. When demand rises, the system doesn’t stop functioning. It starts reorganizing how flow is distributed so it doesn’t collapse under concentration.

I often think about it like a harbor. When traffic is light, ships dock and leave without delay. But when multiple ships arrive at the same time, the harbor doesn’t panic. It starts assigning priority, adjusting timing, and managing movement in a way that keeps ships from blocking one another. The experience becomes less uniform, but more stable overall.
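
To make the harbor picture a bit more concrete, here is a minimal sketch of priority scheduling in Python. It is only an illustration of the general idea, not how Pixels or any particular chain actually orders work. The `Harbor` class, its method names, and the priority numbers are all hypothetical.

```python
import heapq
import itertools


class Harbor:
    """Hypothetical priority scheduler: lower number docks first,
    ties are broken by arrival order."""

    def __init__(self):
        self._heap = []
        self._arrival = itertools.count()  # monotonically increasing tiebreaker

    def arrive(self, ship, priority):
        # The arrival counter keeps ships of equal priority in FIFO order.
        heapq.heappush(self._heap, (priority, next(self._arrival), ship))

    def dock_next(self):
        # Returns the next ship to dock, or None if nothing is waiting.
        if not self._heap:
            return None
        _, _, ship = heapq.heappop(self._heap)
        return ship


harbor = Harbor()
harbor.arrive("cargo", priority=2)
harbor.arrive("ferry", priority=1)
harbor.arrive("tanker", priority=2)
order = [harbor.dock_next() for _ in range(3)]
```

The ferry docks first even though it arrived second, while the two equal-priority ships keep their arrival order. That is the "less uniform, but more stable" feeling from the outside.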

When I look at how @Pixels approaches this, what caught my attention is not just the visible gameplay layer, but how the system seems to behave during moments of renewed activity. It doesn’t feel like a simple content update sitting on top of a fixed structure. It feels more like a system that becomes active in response to participation itself.

What interests me more is how that activity reveals the underlying design choices.

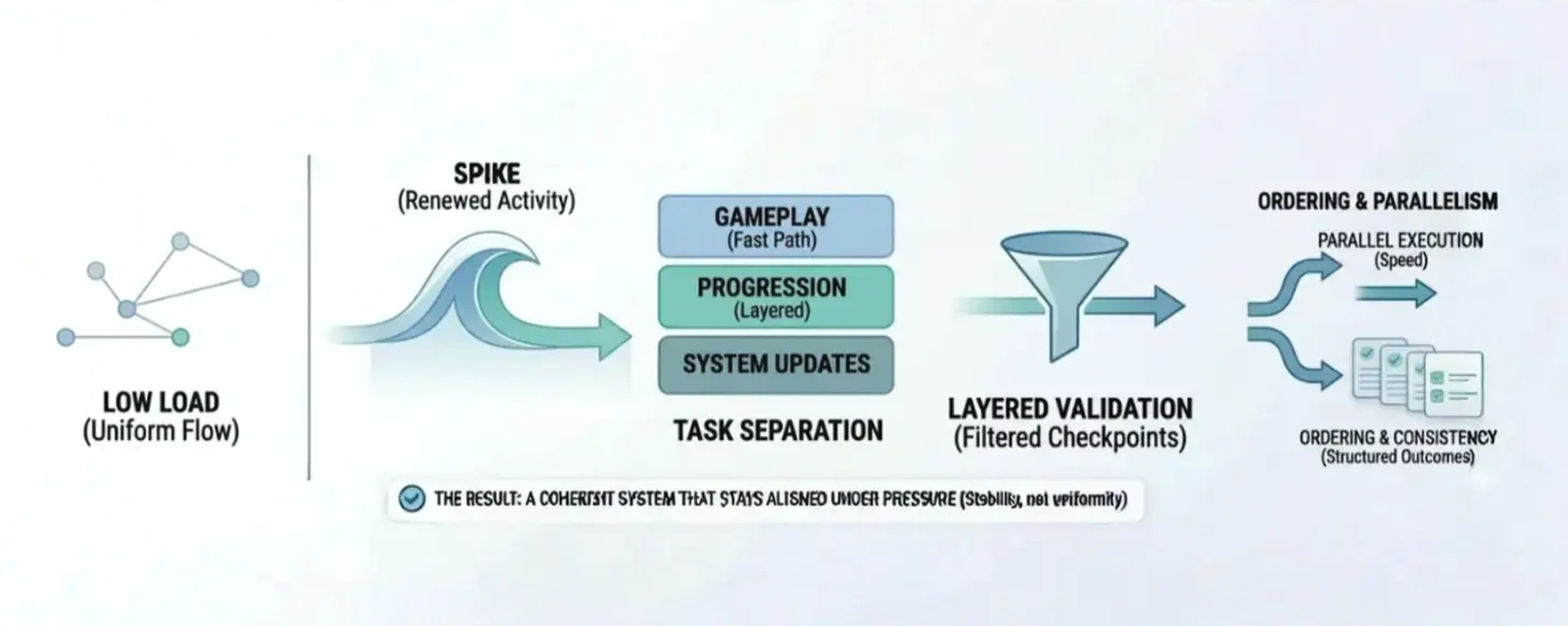

Scheduling feels less rigid than it appears at first. Some actions move instantly, while others feel slightly delayed. It doesn’t come across as random. It feels like timing is being used as a tool to prevent overload rather than just reflecting execution speed.

Task separation is another thing I keep noticing. The core gameplay loop stays light even when activity increases, while deeper processes like progression or system-level updates seem to operate in separate layers. From a system perspective, that separation is what prevents everything from slowing down at once.

Verification flow also becomes more noticeable during active periods. In my experience watching systems scale, not all actions are processed equally. Some are lightweight and fast, while others require more structured validation. That layered approach helps maintain stability without forcing uniform delay across the system.

Then there is congestion control. What matters in practice is not preventing load, but absorbing it without breaking flow. Backpressure is part of that quiet mechanism: it slows certain paths just enough that the system doesn’t become overwhelmed, while keeping movement alive.
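
One common way to get backpressure is a bounded queue between a fast producer and a slower consumer. The sketch below is a generic illustration under my own assumptions (the function name, the capacity of 4, and the simulated delay are all made up), not a description of any specific system's internals.

```python
import queue
import threading
import time


def run_with_backpressure(n_items=20, capacity=4):
    """Producer is slowed down whenever the bounded queue is full."""
    q = queue.Queue(maxsize=capacity)  # the bound is what creates backpressure
    processed = []
    max_depth = 0

    def consumer():
        while True:
            item = q.get()
            if item is None:           # sentinel: no more work
                break
            time.sleep(0.001)          # simulate slower validation work
            processed.append(item)

    worker = threading.Thread(target=consumer)
    worker.start()
    for i in range(n_items):
        q.put(i)                       # blocks while the queue is full
        max_depth = max(max_depth, q.qsize())
    q.put(None)
    worker.join()
    return processed, max_depth


processed, max_depth = run_with_backpressure()
```

Nothing is dropped and order is preserved, but the producer can never get more than `capacity` items ahead. The delay lands on the sender instead of piling up inside the system.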

Worker scaling and workload distribution only matter when they actually reduce concentration. If everything still passes through a narrow path, adding capacity doesn’t solve much. What makes a difference is how evenly the system spreads activity when demand rises.
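
A small sketch of what "spreading activity" can look like: routing each action to a worker by hashing a key. This is a generic technique I am using for illustration. The key format, the worker count, and the function names are assumptions of mine.

```python
import hashlib
from collections import Counter


def shard_for(key: str, n_workers: int) -> int:
    """Map a key to a worker index in a stable, deterministic way."""
    digest = hashlib.sha256(key.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % n_workers


def distribution(keys, n_workers):
    """Count how many keys land on each worker."""
    return Counter(shard_for(k, n_workers) for k in keys)


# Many distinct keys spread across workers.
spread = distribution([f"player-{i}" for i in range(1000)], n_workers=4)

# The narrow path: everything shares one key, so one worker gets it all,
# no matter how many workers exist.
narrow = distribution(["global-lock"] * 1000, n_workers=4)
```

The second case is the point of the paragraph above: with a single hot key, going from 4 workers to 40 changes nothing, because the concentration sits in the routing, not in the capacity.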

And then there is the balance between ordering and parallelism. Parallel execution keeps interaction responsive, especially in gameplay, but ordering is still necessary for maintaining consistency in structured outcomes. The challenge is allowing both to exist without interfering with each other.
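
One way I picture both coexisting is per-key lanes: events for the same key are applied strictly in order, while different keys run in parallel. The sketch below is a simplified illustration under that assumption. `process_in_lanes` and the event shape are hypothetical, and real systems handle this with far more care.

```python
from collections import defaultdict
from concurrent.futures import ThreadPoolExecutor


def process_in_lanes(events, max_workers=4):
    """events: iterable of (key, payload) pairs.
    Returns {key: [payloads in the order they were applied]}."""
    lanes = defaultdict(list)
    for key, payload in events:
        lanes[key].append(payload)     # arrival order is preserved per key

    def run_lane(item):
        key, payloads = item
        applied = []
        for p in payloads:             # strictly sequential within one lane
            applied.append(p)
        return key, applied

    # Different lanes are independent, so they can run concurrently.
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return dict(pool.map(run_lane, lanes.items()))


events = [("a", 1), ("b", 1), ("a", 2), ("b", 2), ("a", 3)]
result = process_in_lanes(events)
```

Ordering is only enforced where it matters (within a key), so unrelated activity never waits on it.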

What stood out to me during renewed activity is that Pixels doesn’t feel like it simply reacts to events. It feels like the system subtly adjusts itself based on how people are engaging at that moment. The experience remains simple on the surface, but underneath there is constant rebalancing happening in timing and flow.

From a broader view, this is what makes systems like this interesting. Not how they behave when things are quiet, but how they adapt when participation returns and pressure rises again.

A reliable system is not the one that feels perfectly smooth at all times, but the one that stays coherent when conditions change. Good infrastructure doesn’t draw attention to its adjustments. It simply keeps everything aligned, even when activity comes in waves.