Every now and then a project comes back to my mind, not because it is everywhere online, but because something about it feels unresolved. Fabric Protocol is one of those projects for me. It is not the loudest name in crypto or AI, and it does not constantly appear in conversations. But the concept behind it sits in a strange place in my thoughts, like a question I have not fully answered yet.

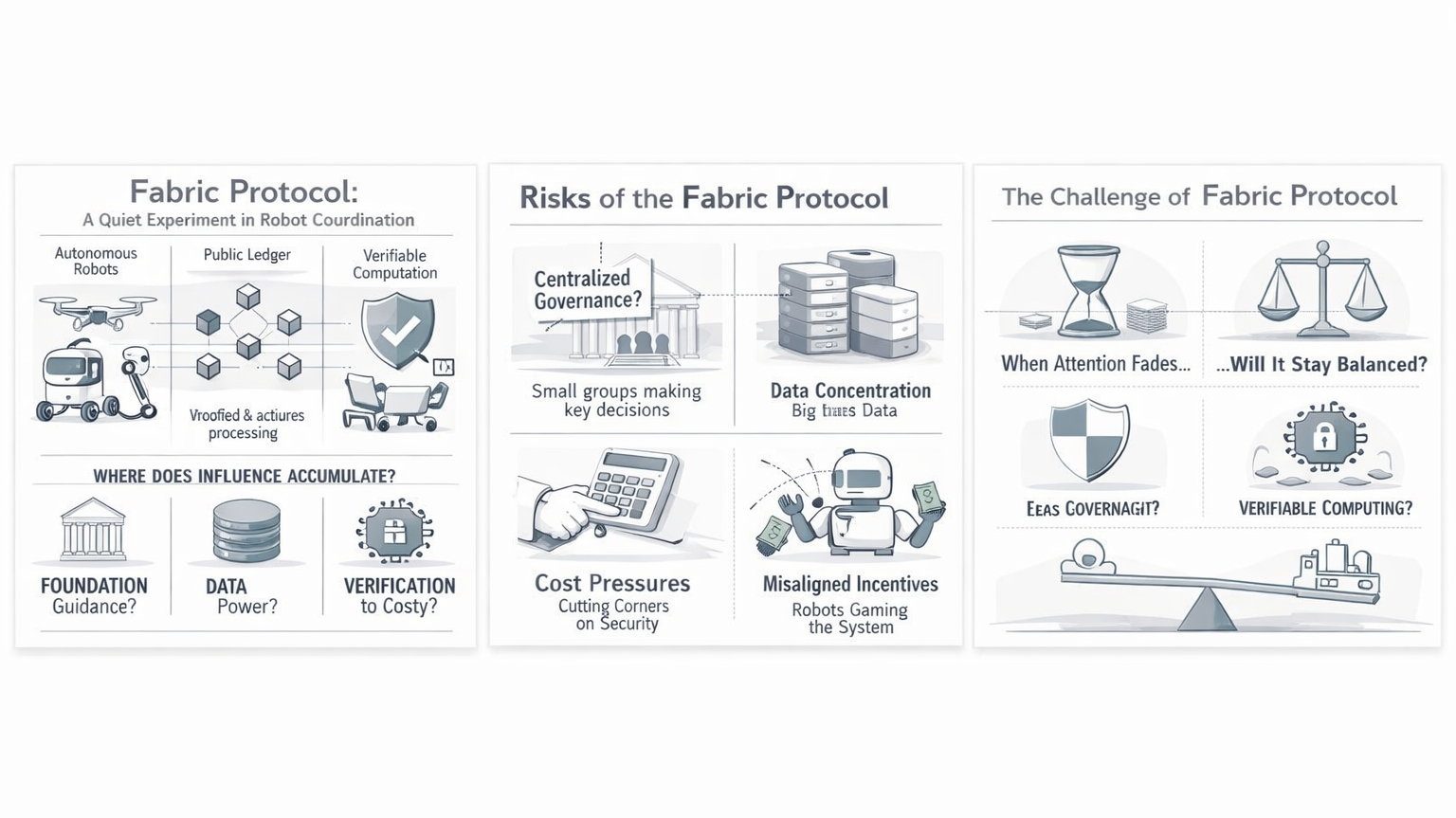

Fabric describes itself as an open network for coordinating robots and intelligent machines. The idea is that robots, AI agents, and human systems could interact through a shared infrastructure where actions, data, and computation are verified through cryptography and recorded on a public ledger. Instead of trusting a company or a central authority, the system itself would provide the proof that something happened correctly.

At first, the idea seems almost obvious. If robots and autonomous machines become more common in the real world, they will probably need some shared coordination layer. Machines will collect data, perform tasks, request services, and interact with other machines. A neutral infrastructure that organizes all of this sounds reasonable.

But when I think about it for longer, I start wondering whether the problem is actually technical or whether it is mostly human.

Fabric suggests that verification and transparency can help build trust between machines and organizations. In theory, a robot could log what it does, prove its computations, and record that information on a public system where anyone can check it. That sounds powerful. But transparency alone does not always lead to fairness.

A system can be completely transparent and still slowly concentrate influence in the hands of a few participants.
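
The logging half of that idea is easy to sketch, which is partly why it feels so plausible. Below is a minimal hash-chained log in Python. It is my own illustration, not anything taken from Fabric: the record fields and the names `append`, `verify`, and `GENESIS` are hypothetical, and a real network would add signatures and consensus on top.

```python
import hashlib
import json

# Hypothetical starting value for the chain; not a Fabric constant.
GENESIS = "0" * 64


def entry_hash(prev_hash: str, record: dict) -> str:
    """Hash a record together with the hash of the entry before it."""
    payload = json.dumps({"prev": prev_hash, "record": record}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append(log: list, record: dict) -> None:
    """Add a record to the log, linking it to the previous entry."""
    prev = log[-1]["hash"] if log else GENESIS
    log.append({"record": record, "prev": prev, "hash": entry_hash(prev, record)})


def verify(log: list) -> bool:
    """Recompute every link; any edited or reordered entry breaks the chain."""
    prev = GENESIS
    for entry in log:
        if entry["prev"] != prev:
            return False
        if entry["hash"] != entry_hash(prev, entry["record"]):
            return False
        prev = entry["hash"]
    return True


if __name__ == "__main__":
    log = []
    append(log, {"robot": "r1", "action": "pick", "item": 7})
    append(log, {"robot": "r1", "action": "place", "item": 7})
    print(verify(log))  # chain is intact

    log[0]["record"]["item"] = 8  # quietly rewrite history
    print(verify(log))  # the tampering is detected
```

Anyone holding the log can rerun `verify` and notice a rewritten entry. What the sketch cannot show is whether the robot really did what the record says, or who ends up with influence over the network, and that is the part I keep coming back to.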

Fabric is supported by a foundation, which is common for crypto infrastructure. Foundations usually start with a careful role. They guide development, protect the project’s mission, and support the community around it. In the beginning this kind of structure can help a project grow without being controlled by a single company.

But I often wonder how these structures evolve over time.

Foundations are meant to be neutral stewards, but they tend to become reference points. People look to them for guidance when decisions are unclear. They help coordinate upgrades, interpret governance outcomes, and maintain the direction of the project. None of this is necessarily harmful. In fact, some coordination is necessary.

Yet over time that coordination can slowly become influence.

The system may still look decentralized from the outside, but in practice many decisions start orbiting around the same small group of people who understand the system best.

Another thing that makes Fabric interesting is the type of information that robots generate. Robots do not just move objects or complete tasks. They constantly produce data about the physical world. Sensors collect information about environments, movements, and patterns of activity.

If a network like Fabric ever connects large numbers of machines, the data flowing through it could become extremely valuable.

The protocol might aim to distribute that value across the network, but large organizations usually have advantages when it comes to processing and analyzing information at scale. Over time it seems possible that companies operating large fleets of robots could quietly gain more influence simply because they contribute more data and have the resources to use it effectively.

In that situation the network could remain technically open while practical influence slowly gathers around a few powerful participants.

There is also the question of verification itself. Fabric talks about verifiable computation, which means a calculation can be proven correct with a cryptographic proof, so that others can check the result without redoing the work. It is an elegant idea. But generating proofs requires computing power, time, and resources.

If the network grows large, those verification costs might become significant.
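
A back-of-the-envelope model helps me see the pressure. The sketch below assumes one proof per action and a fixed cost per proof, so the total grows linearly with the network. Both assumptions are mine, and the function names and parameters are hypothetical rather than anything Fabric specifies.

```python
def daily_proof_cost(robots: int, actions_per_robot: int, seconds_per_proof: float) -> float:
    """Compute-seconds per day spent on proofs, assuming one proof per action."""
    return robots * actions_per_robot * seconds_per_proof


def fraction_verifiable(robots: int, actions_per_robot: int,
                        seconds_per_proof: float, budget_seconds: float) -> float:
    """Share of actions that can be proven within a fixed daily compute budget."""
    total = daily_proof_cost(robots, actions_per_robot, seconds_per_proof)
    if total == 0:
        return 1.0  # nothing to prove
    return min(1.0, budget_seconds / total)


if __name__ == "__main__":
    # Illustrative inputs only; none of these numbers come from Fabric.
    for robots in (10, 100, 1000):
        share = fraction_verifiable(robots, actions_per_robot=100,
                                    seconds_per_proof=2.0, budget_seconds=1000.0)
        print(robots, share)
```

Under these assumptions, holding the budget fixed while the fleet grows means a shrinking share of actions can be proven at all. Someone then has to decide which actions go unverified.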

When systems become expensive to operate, participants usually search for ways to reduce the cost. Sometimes that means simplifying processes or moving parts of the system outside the main verification layer. At first those changes may seem harmless.

But over time the system could start relying more on trust again, just in different places.

The ledger might still exist and record events, but the real decision-making could slowly move into off-chain coordination, private infrastructure, or organizational agreements that are not as visible.

Another layer of complexity comes from the fact that robots operate in the physical world. Purely digital systems are easier to verify because their inputs and outputs stay inside software. Robots interact with messy environments where sensors can fail, machines can break, and unexpected situations happen constantly.

If a robot performs a task incorrectly, it may not always be clear why.

Was it a hardware failure? A software bug? A mistake by the operator who deployed it? Or something that the protocol itself encouraged through its incentive structure?

These kinds of questions are difficult to resolve automatically. At some point human judgment becomes necessary.

That is where governance enters the picture. A network like Fabric would eventually need ways to handle disputes, define rules, and update the system. Early governance might involve open participation from the community. But as systems become more complex, decisions often move toward smaller groups who have the expertise to interpret technical and operational issues.

Over time those groups can become informal centers of authority.

This shift does not necessarily happen because anyone intends to centralize power. It often happens simply because complexity makes wide participation difficult.

Another thing that keeps me thinking about Fabric is the economic layer. The protocol seems to imagine a world where machines and AI agents can participate in an open economy of services. Robots could request resources, offer capabilities, and coordinate tasks automatically.

It sounds efficient, but economic systems rarely behave exactly as designers expect.

Participants usually discover strategies that maximize their rewards within the rules of the system. Sometimes those strategies align with useful outcomes. Other times they create strange behaviors that technically follow the rules while quietly draining value from the network.

If machines are optimizing for rewards defined by a protocol, even small misalignments in incentives could scale quickly.

A robot might perform tasks that maximize protocol rewards but do not actually contribute meaningful value to the real world. Over time the network might need to adjust its rules to discourage those behaviors.
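
A toy example makes the gap visible. In the sketch below, each task carries a protocol reward and a separate real-world value, and a robot picks whichever task pays the most per hour. Everything here is hypothetical: the `Task` fields, the task names, and the numbers are invented for illustration and say nothing about Fabric's actual reward rules.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    name: str
    reward: float      # what the protocol pays (hypothetical units)
    real_value: float  # value to the outside world (hypothetical units)
    duration: float    # hours needed to complete the task


def pick_by_reward(tasks: list) -> Task:
    """What a reward-maximizing robot chooses: highest protocol reward per hour."""
    return max(tasks, key=lambda t: t.reward / t.duration)


def pick_by_value(tasks: list) -> Task:
    """What we would want it to choose: highest real-world value per hour."""
    return max(tasks, key=lambda t: t.real_value / t.duration)


# Invented tasks: a quick check that pays well but helps little,
# and a slower job whose reward matches its usefulness.
TASKS = [
    Task("inspect_shelf", reward=1.0, real_value=0.1, duration=0.1),
    Task("deliver_package", reward=5.0, real_value=5.0, duration=1.0),
]

if __name__ == "__main__":
    print(pick_by_reward(TASKS).name)  # inspect_shelf
    print(pick_by_value(TASKS).name)   # deliver_package
```

The robot that repeats the quick inspection all day is following the rules exactly. Closing the gap means changing how the reward is defined.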

But changing rules can also introduce new complications.

Every adjustment shifts incentives slightly. Some participants benefit while others lose advantages they previously relied on. Governance decisions start to carry economic consequences, which can make them more political than technical.

I also wonder about the role of convenience.

Projects often begin with strong commitments to principles like verification, transparency, and decentralization. But when real users start interacting with the system, they naturally want things to be faster, cheaper, and easier to use.

Small compromises begin to appear.

Maybe verification is reduced for certain operations. Maybe some processes move off-chain to improve efficiency. Maybe trusted operators are allowed to perform tasks that were originally meant to be fully decentralized.

Each change seems reasonable on its own. But over time the system can drift away from its original philosophy without any single moment where the change feels dramatic.

Fabric sits in an interesting place because it touches several powerful trends at once. Robotics is advancing quickly. AI systems are becoming more capable. Decentralized infrastructure continues to experiment with new forms of coordination.

Bringing these ideas together could eventually produce systems that are very different from the digital networks we are used to.

But complexity also means uncertainty.

The more moving parts a system has, the more difficult it becomes to predict how incentives and behaviors will evolve.

What keeps Fabric in my thoughts is not the promise that it will succeed. It is the possibility that something like it might quietly become part of the infrastructure connecting machines and intelligent systems.

If that happens, its most important effects might appear slowly, long after the moment when people are actively discussing it.

The real question for me is not whether the system works when everyone is paying attention to it. Many systems work under those conditions.

The question that lingers is what happens later, when attention fades, when incentives become uncomfortable, and when participants start pushing the boundaries of the rules.

I cannot say with confidence how Fabric would behave in that environment.

And maybe that uncertainty is exactly what makes the idea difficult to ignore.