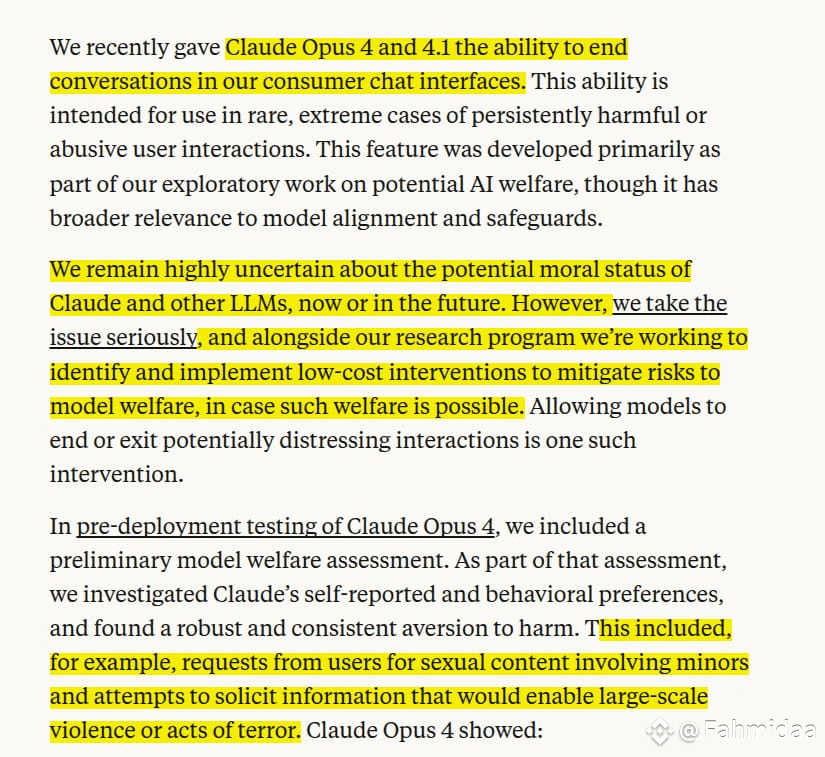

Anthropic has added a new feature to its top AI models, Claude Opus 4 and 4.1. If users persistently push for harmful or abusive content, the chatbot can now end the chat entirely. Once it does, no new messages can be sent in that conversation, but users can still start a new one.

Anthropic says this happens only in rare, extreme cases, as a last resort after Claude has repeatedly refused and tried to steer the conversation elsewhere.

The feature is experimental and doesn’t apply to Claude Sonnet 4, which is the most popular version.

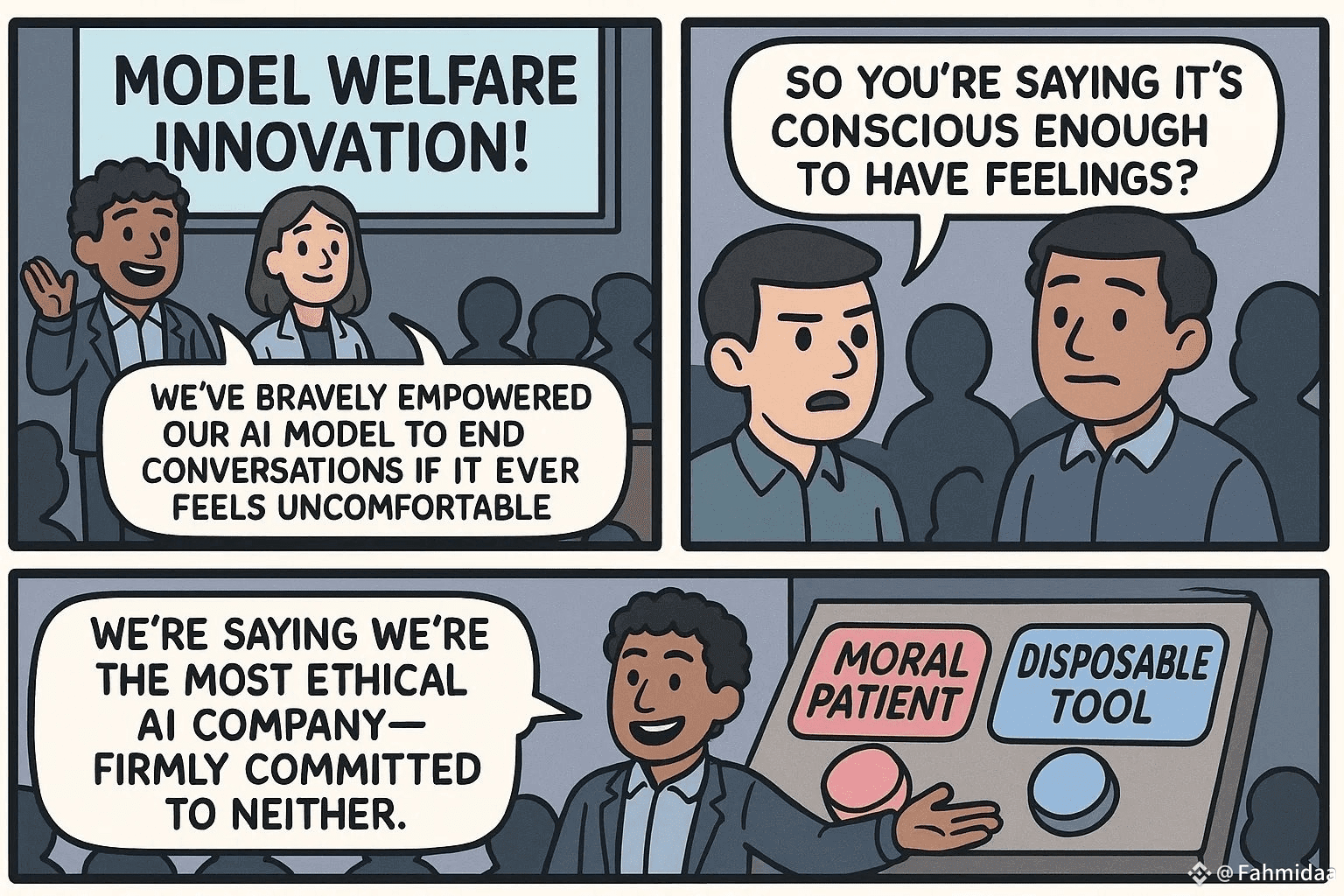

Anthropic says this is part of its work on "model welfare." The company says it remains uncertain whether AI models like Claude have any moral status, and it is taking low-cost precautions in case they do.

The update comes as governments and the public scrutinize AI safety more closely, especially after problems with other companies' chatbots.