What made Mira feel different to me was not the usual promise that AI will become smarter, faster, or more autonomous. By now, those promises are everywhere. The part that caught my attention was much less flashy: Mira is built around the idea that intelligence is not enough if nobody can meaningfully check it. Its whitepaper frames the core problem clearly—AI systems can produce plausible outputs while still being wrong, and that reliability gap is one of the biggest barriers stopping AI from being trusted in higher-stakes settings.

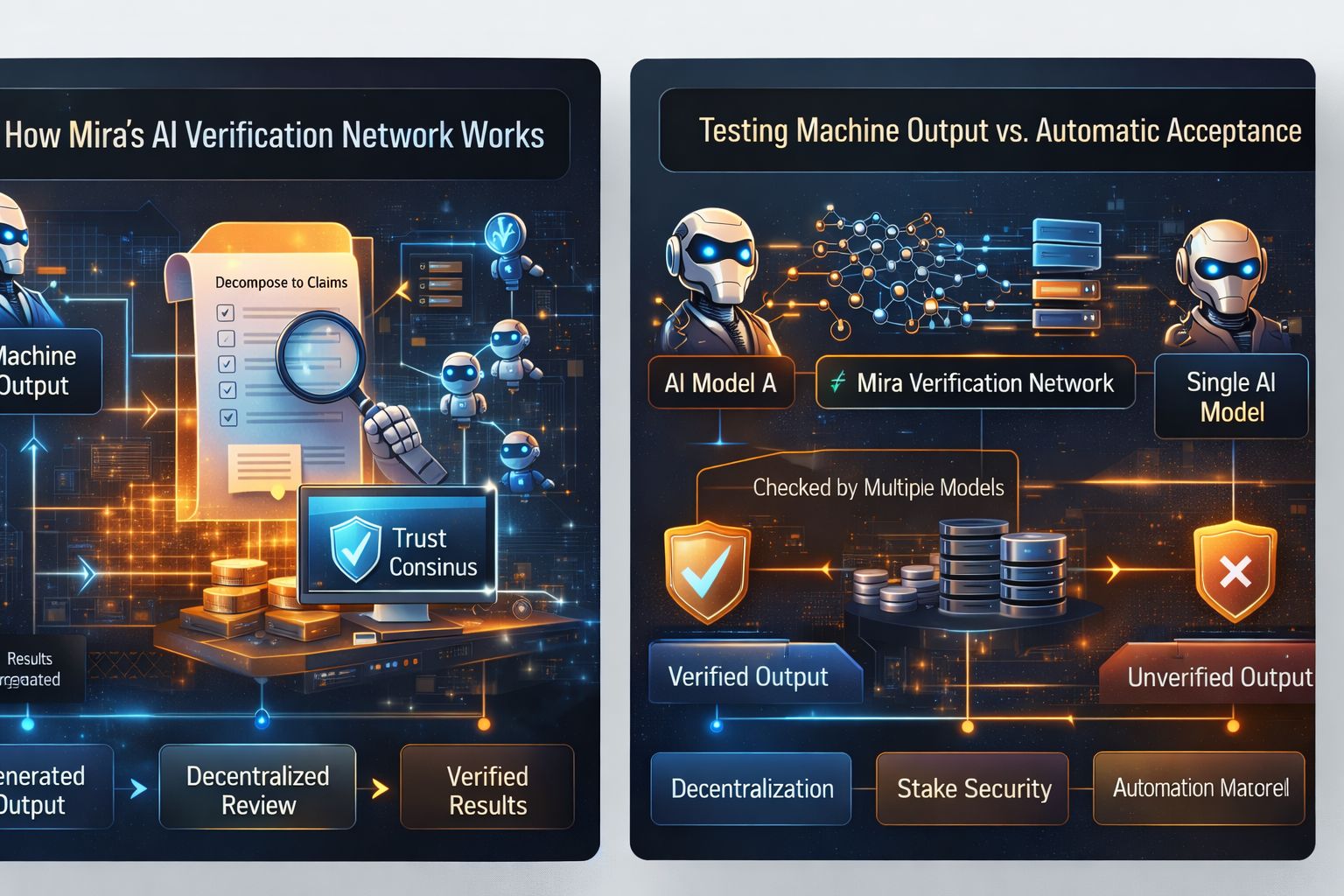

That is why I do not think Mira is best understood as another attempt to win the model race. It makes more sense as an attempt to build the inspection layer that the AI economy is missing. Most people discuss AI as if the most valuable machine is the one that can generate the most text, the best code, or the quickest answers. Mira starts from a more grounded assumption: once AI begins to influence money, software, research, operations, or decision-making, output alone is not enough. What matters is whether those outputs can survive review. Its whitepaper describes a network that transforms generated content into independently verifiable claims, then sends those claims through decentralized consensus among multiple models rather than relying on a single system’s authority.

The analogy that keeps coming to mind for me is not “AI as a genius assistant.” It is “AI as a factory that suddenly needs quality control.” A factory can be full of powerful machines, but if nothing is tested at the end of the line, production speed becomes a dangerous illusion. Products can ship quickly and still be defective. In that sense, Mira is trying to do for AI what inspection departments do for manufacturing: separate output from accepted output. That difference is subtle on paper, but in practice it changes everything. Instead of assuming that a polished answer deserves belief, Mira’s architecture assumes that claims should be broken apart, examined, compared, and certified before they are trusted. The network’s own research explains that this transformation step is necessary because complex outputs cannot be reliably checked if every verifier is looking at the material differently.

That is one of the more thoughtful pieces of the design. Mira is not asking different models to react vaguely to a paragraph and then pretending that agreement equals truth. The system is described as decomposing content into distinct claims so each verifier is answering the same problem with the same context. Only then does it aggregate results and issue a cryptographic certificate describing the outcome. The whitepaper says this process can apply not only to short factual claims but also to more complex material such as technical documentation, creative writing, multimedia content, and code. That broad scope is important because it suggests the team is not thinking only about simple fact-checking; it is trying to design a general verification framework for machine output.
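To make that flow concrete for myself, I sketched it in a few lines of Python. This is only an illustration of the idea as the whitepaper describes it, not Mira's actual implementation or API. The function names (`aggregate`, `certify`), the two-thirds threshold, the vote labels, and the model names are all my own assumptions. The "certificate" here is just a SHA-256 digest of the results, standing in for whatever cryptographic certificate the real network issues.

```python
import hashlib
import json
from collections import Counter
from fractions import Fraction

# Hypothetical threshold: the share of verifiers that must agree on a verdict.
THRESHOLD = Fraction(2, 3)

def aggregate(votes, threshold=THRESHOLD):
    """Return the verdict for one claim, or 'no_consensus' if agreement is too low."""
    counts = Counter(votes.values())
    verdict, top = counts.most_common(1)[0]
    if Fraction(top, len(votes)) >= threshold:
        return verdict
    return "no_consensus"

def certify(claims, votes_by_claim, threshold=THRESHOLD):
    """Aggregate every claim and attach a digest of the outcome (a stand-in certificate)."""
    results = [
        {"claim": claim, "verdict": aggregate(votes_by_claim[claim], threshold)}
        for claim in claims
    ]
    payload = json.dumps(results, sort_keys=True).encode("utf-8")
    return {"results": results, "digest": hashlib.sha256(payload).hexdigest()}

# A compound statement, already decomposed into separate claims (done by hand here).
claims = [
    "The Earth orbits the Sun.",
    "The Moon orbits the Sun independently of the Earth.",
    "The Sun is the largest star in the galaxy.",
]

# Every verifier answers the same claim with the same context (votes are made up).
votes = {
    claims[0]: {"model_a": "valid", "model_b": "valid", "model_c": "valid"},
    claims[1]: {"model_a": "invalid", "model_b": "valid", "model_c": "invalid"},
    claims[2]: {"model_a": "invalid", "model_b": "valid", "model_c": "uncertain"},
}

certificate = certify(claims, votes)
for row in certificate["results"]:
    print(row["verdict"], "-", row["claim"])
print("digest:", certificate["digest"])
```

The point of the sketch is the ordering: decomposition comes first, so the votes are comparable, and only then does aggregation mean anything. A claim that fails to reach the threshold is reported as unresolved rather than forced into a verdict.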

Another reason Mira stands out is that it treats trust as an economic problem, not just a technical one. Many AI discussions stop at the idea that verification is useful. Mira goes further and asks how a network can make honest verification financially rational. Its economic security model combines staking with verification work, and the paper explains why that matters. Once verification tasks are standardized, random guessing can become tempting because the response space is limited: on a true-or-false claim, a node that does no work at all still matches the correct answer about half the time. To counter that, nodes have to stake value, and that stake can be slashed if they repeatedly deviate from consensus or behave in ways that suggest they are not actually doing the work. Fees paid for verified output are then distributed to participants. In other words, Mira is trying to build a system where checking claims is not charity, but paid labor with consequences for low-quality participation.
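The incentive logic is easier to see with numbers, so here is a rough back-of-the-envelope model. It is my own simplification, not Mira's actual reward or slashing formula: I assume a guesser picks uniformly at random, and I collapse "repeated deviation" into a single round where failing costs a fixed fraction of the stake. The fee, stake, slash fraction, and the 95% pass rate for an honest node are made-up illustrative values.

```python
from math import comb

def p_guess_at_least(n_claims, k_needed, n_options=2):
    """Chance that uniform random guessing matches on at least k_needed of n_claims."""
    p = 1 / n_options
    return sum(
        comb(n_claims, k) * p**k * (1 - p) ** (n_claims - k)
        for k in range(k_needed, n_claims + 1)
    )

def expected_payoff(p_pass, fee, stake, slash_fraction):
    """Expected value of one round: earn the fee on a pass, lose part of the stake otherwise."""
    return p_pass * fee - (1 - p_pass) * slash_fraction * stake

# One binary claim: a coin flip matches half the time.
single = p_guess_at_least(1, 1)

# Ten binary claims, all of which must match: guessing almost never works.
all_ten = p_guess_at_least(10, 10)

# Hypothetical economics: fee of 1, stake of 100, 10% slashed on a failed round.
guesser = expected_payoff(single, fee=1.0, stake=100.0, slash_fraction=0.1)
honest = expected_payoff(0.95, fee=1.0, stake=100.0, slash_fraction=0.1)

print(f"guess 1 of 1:   {single:.4f}")
print(f"guess 10 of 10: {all_ten:.6f}")
print(f"expected payoff, guesser: {guesser:+.2f}")
print(f"expected payoff, honest:  {honest:+.2f}")
```

Under these assumed numbers the guesser loses money on average while the honest node comes out ahead, which is the whole design goal: the stake has to be large enough, relative to the fee, that skipping the work is a losing strategy.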

I think that economic angle is where the project becomes more interesting than a lot of AI-token narratives. There are many projects that attach a token to an abstract future. Mira’s framework at least tries to connect token utility to a concrete service: customers pay for verification, operators stake to provide it, and the network attempts to turn reduced error rates into something with market value. That idea also appears in Mira’s documentation and ecosystem materials, where the network exposes developer-facing tooling and authentication flows for API usage, including API token creation and usage monitoring through the Mira Console. That signals a practical ambition: not just talking about verified intelligence, but packaging it as something developers can access and build on.

The product side reinforces that impression. Mira Verify is presented as a beta API designed for autonomous AI applications, with the pitch that multiple models cross-check claims and produce auditable certificates so teams do not need constant manual review. The site explicitly emphasizes automated verification, auditable outputs, and multi-model consensus as the reason developers can build systems with less “AI babysitting.” That phrasing matters because it reveals how the team wants the product to be used: not as a decorative trust badge, but as infrastructure for applications that are supposed to operate with less human supervision.

Recent developments also make the project easier to take seriously than if it were still only an architectural concept. Mira introduced Klok as a chat application built on its decentralized verification infrastructure, which showed an early effort to turn the verification thesis into a user-facing product rather than leaving it buried in research language. It also announced Magnum Opus, a $10 million builder grant program aimed at supporting teams building AI applications on the network, which suggests Mira understands that a trust layer becomes meaningful only if an ecosystem actually uses it. On top of that, the network publicly announced its mainnet launch in late September 2025, marking a transition from idea and pre-launch infrastructure toward live participation, registration, staking, and token claiming.

Third-party coverage around that mainnet phase described Mira as serving more than 4.5 million users across ecosystem applications and processing over 3 billion tokens daily (tokens here in the sense of units of model text, not crypto tokens) as it moved into full operations. Those figures should always be read carefully, especially in crypto where numbers can be used aggressively in narratives, but they still matter because they indicate the project was positioning itself around actual throughput and network usage rather than just abstract roadmaps. Even if someone remains skeptical, the transition to mainnet is still a meaningful milestone because it forces the system’s assumptions about incentives, participation, and verification quality to meet real conditions.

What I personally find strongest about Mira is that it does not ask us to believe that AI reliability will magically emerge from larger models alone. Its whitepaper argues the opposite: that single models face a limit because reducing one class of error can worsen another, and that collective verification is a way to reduce hallucinations and balance bias through decentralized participation. Whether that thesis fully holds up over time is still something the market and developers will test, but it is at least attacking the right weakness. We are entering a stage where the bottleneck is not just generation capacity. It is the cost of being wrong at scale.

That is why Mira feels more substantial to me than many AI projects that stay trapped in the language of capability. Capability is easy to admire. Reliability is harder to engineer, harder to measure, and harder to monetize. Yet reliability is the part that decides whether AI remains a helpful assistant on the edge of serious work or becomes something institutions can confidently depend on. Mira’s entire structure—claim decomposition, multi-model verification, consensus, auditable certificates, staking, slashing, API access, and ecosystem tooling—suggests that the team understands this distinction.

So when I look at Mira, I do not mainly see another token trying to borrow momentum from artificial intelligence. I see a project making a more difficult bet: that the real value in AI may not belong to the loudest generator, but to the system that can make machine output pass inspection before people build on top of it.
